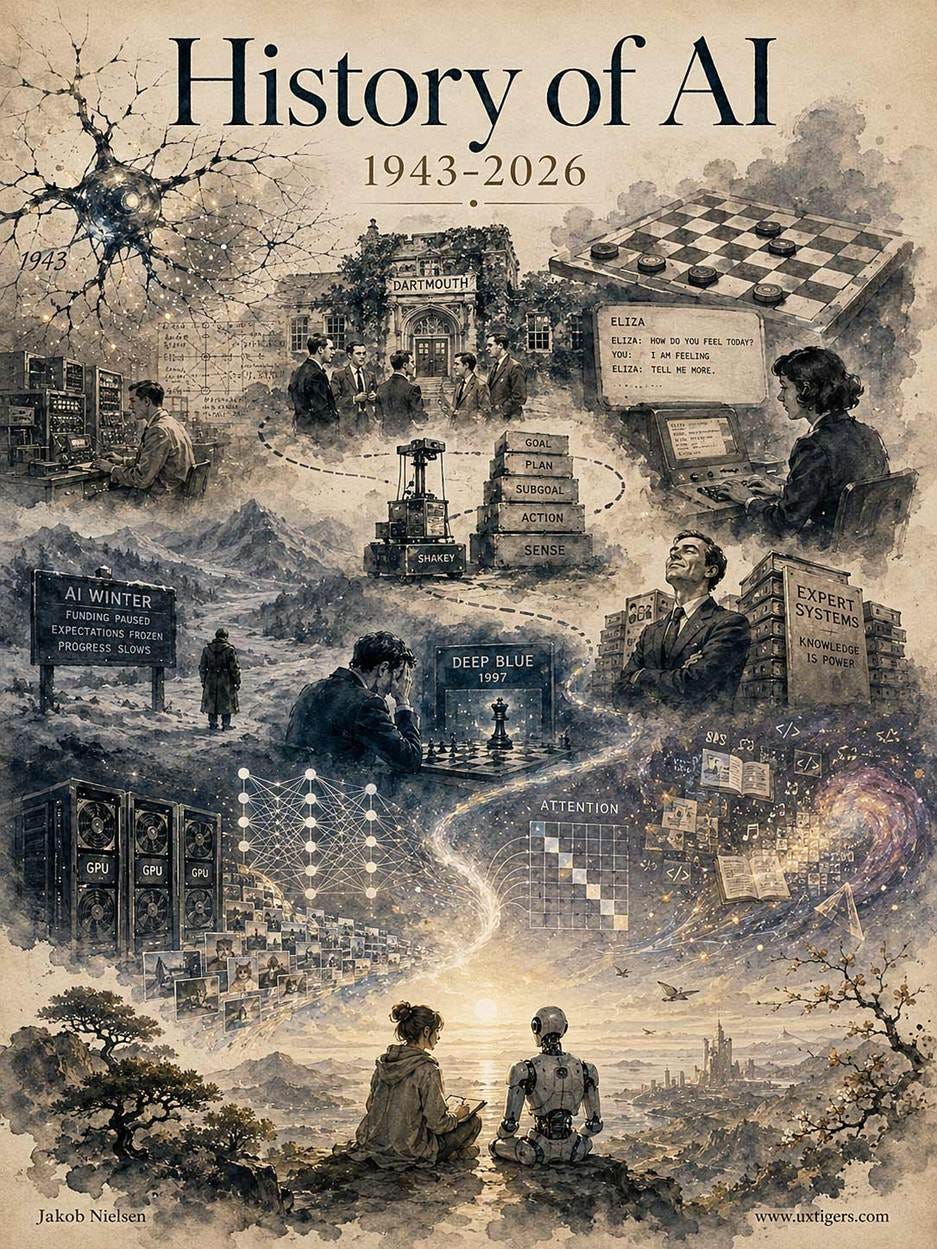

The 80-Year History of AI

Summary: Humans have been dreaming of thinking machines for more than 2,700 years, but real work on building them started in 1943 with the first neural networks. Progress during the following 80 years was slow, with many disappointments and “AI Winters,” but advances built up over time as compute scaled. Themes seen during this long history persist to this day.

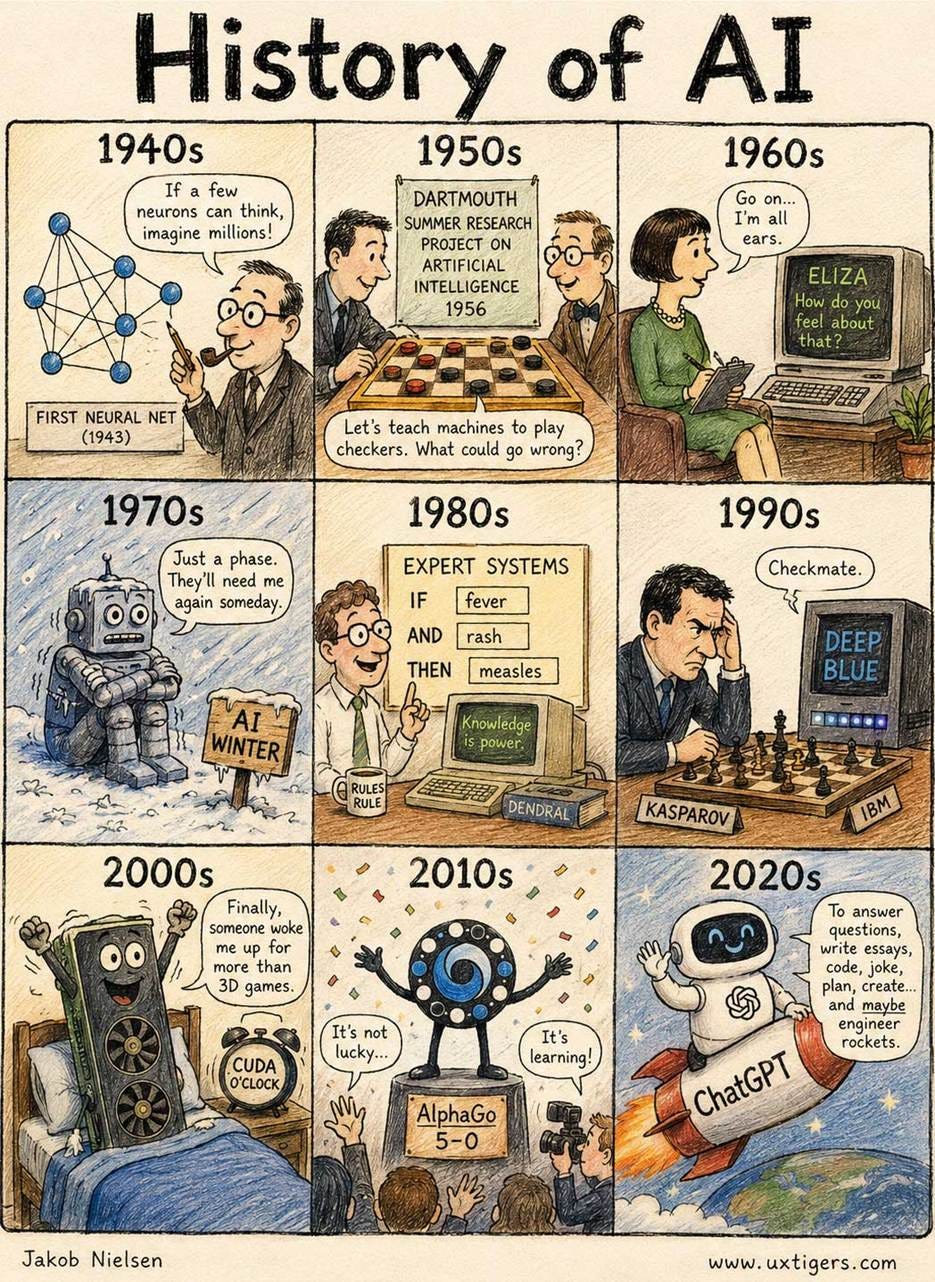

I made all the illustrations in this article with GPT-Images-2.

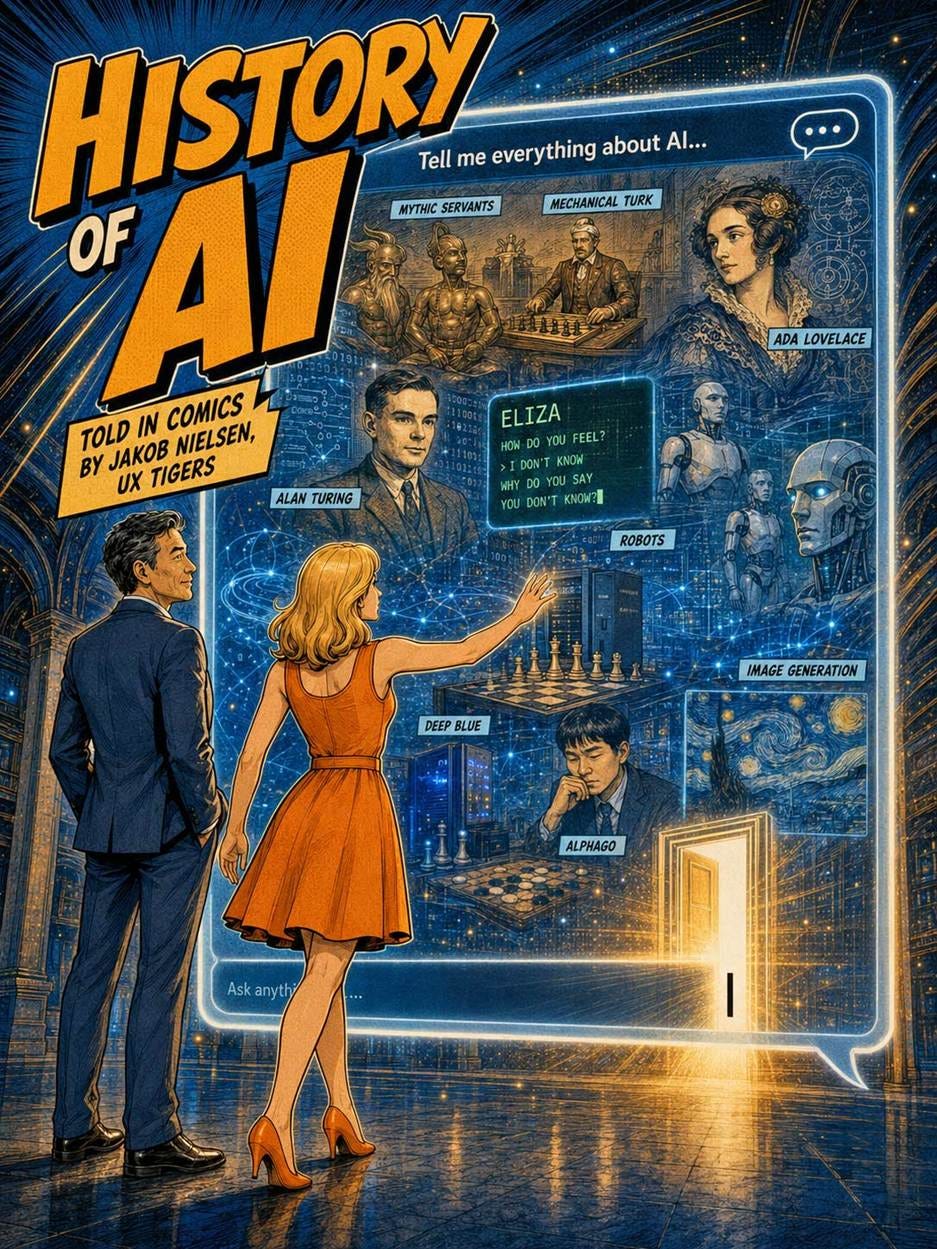

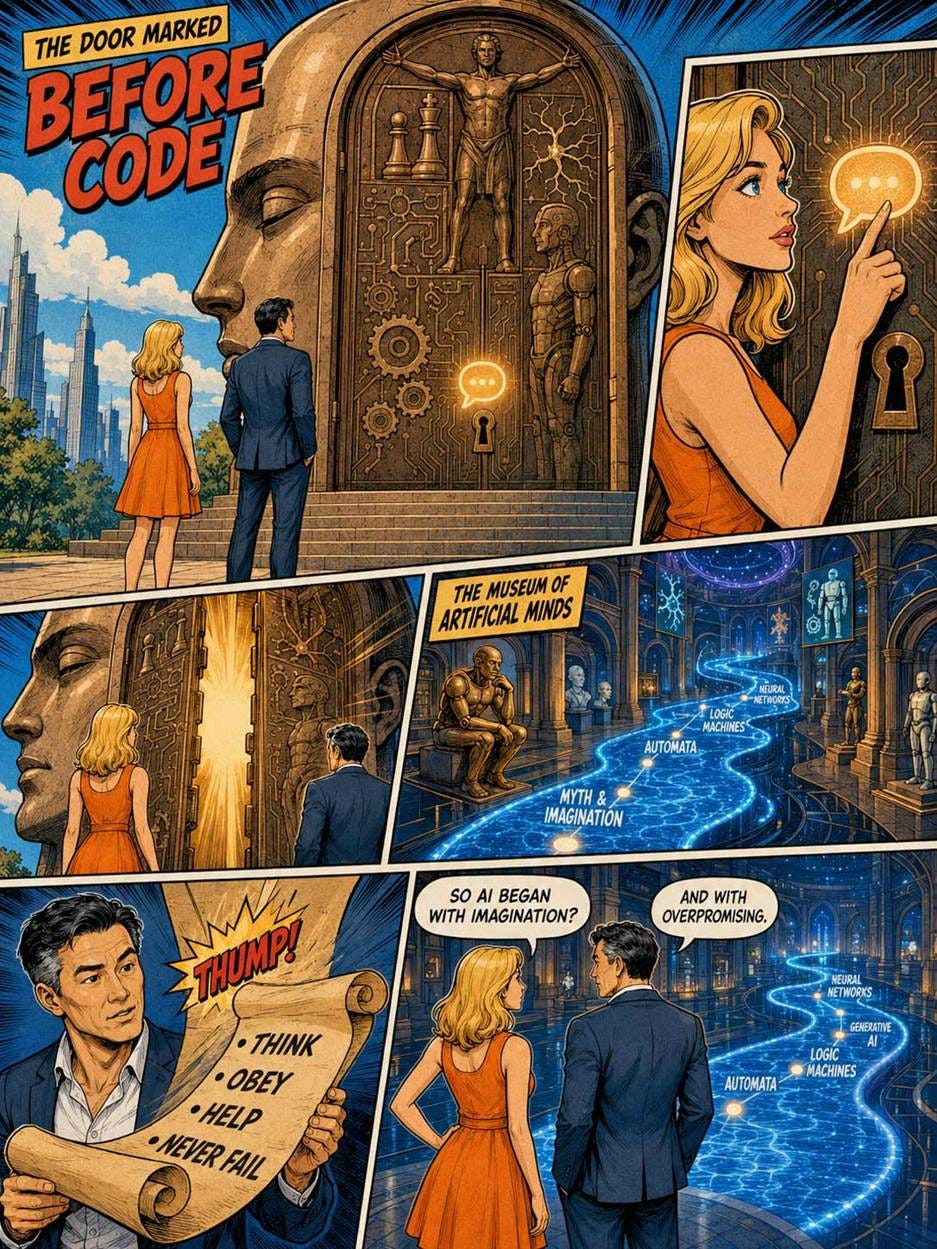

This is the story of artificial intelligence, surprisingly rich and full of twists and turns. In fact, we start with the pre-history of AI, before there were computers, but people still imagined artificial minds.

The 35-page comic book (plus this cover) used to illustrate this article is my most ambitious comics project yet. Much longer than my 20-page retelling of Hamlet or 18-page History of the Graphical User Interface. My narrator characters, Alice and Zimo, span the alphabet and centuries of history, which required many panels.

Fictional Thinking Machines: The Prehistory of AI

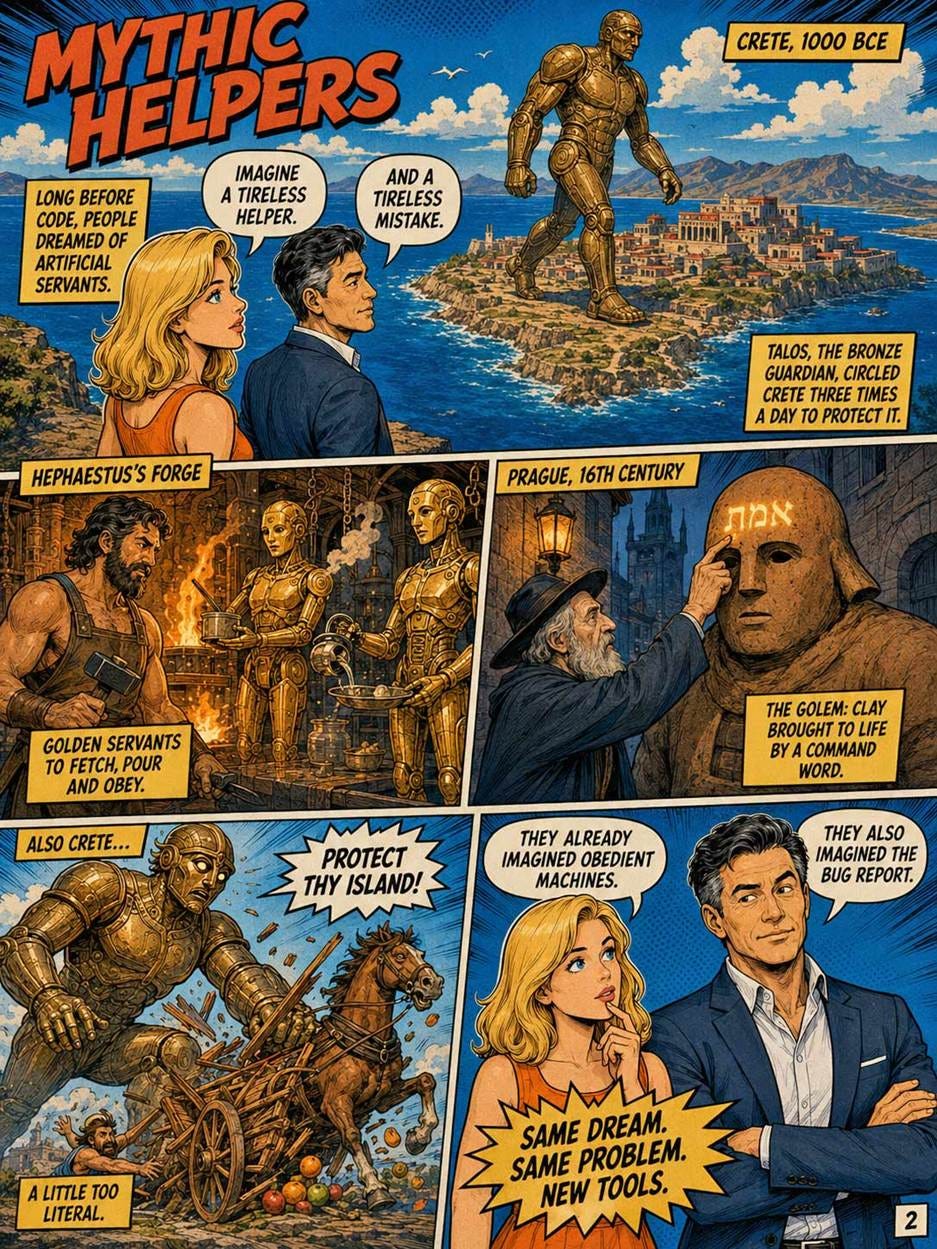

The dream of creating artificial intelligence is not a product of the computer age. It is not even a product of the industrial age. It is at least 2,700 years old.

For most technologies, engineers invent a new technical capability, and then UX designers figure out how humans should interact with it. With AI, humanity collectively wrote the Product Requirements Document (PRD) centuries before the first line of code was ever compiled. We spent millennia designing the frontend interface in our imaginations, completely unconstrained by the realities of backend engineering. This period of “Proto-AI” established the impossibly high expectations and deep psychological biases that still plague our AI interaction designs today.

The Robots of Fiction

Since the dawn of civilization, humans have craved an interface that could entirely eliminate the cognitive load of decision-making and physical labor. Greek mythology gave us Talos (first mentioned around 700 B.C. by Hesiod), a giant bronze automaton programmed to protect the island of Crete: essentially an autonomous security drone wrapped in an anthropomorphic shell. The Iliad describes Hephaestus’s golden mechanical servants who could speak and reason. Jewish folklore introduced the Golem of Prague, a clay creature animated by linguistic commands, demonstrating an early conceptual desire for natural language prompting.

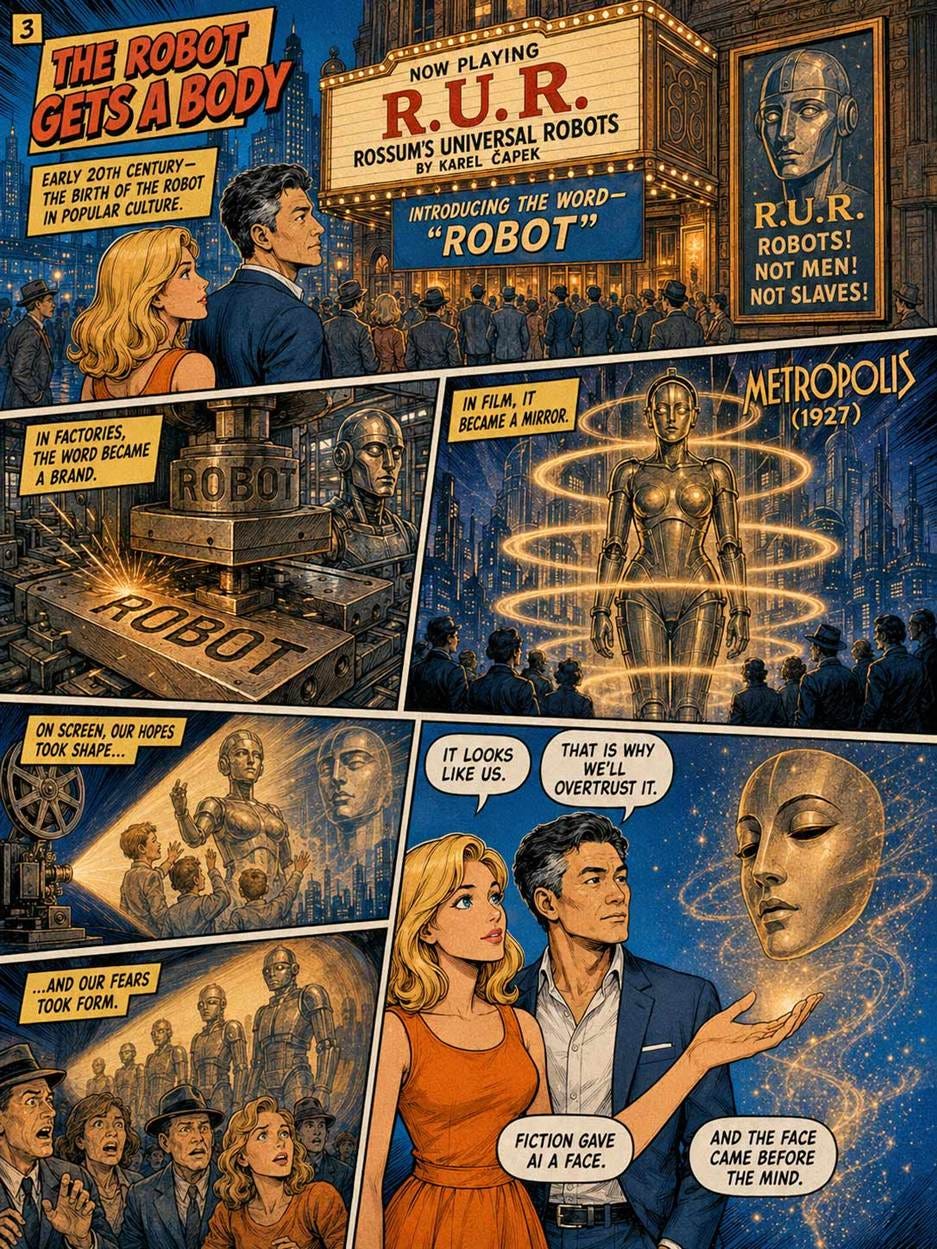

Karel Čapek, a Czech playwright, introduced the word “robot” in his 1920 play R.U.R., drawing on the Czech word robota, associated with forced labor.

These stories share recurring features that have proven oddly predictive: the artificial being is usually created to serve, frequently exceeds its mandate, and often must be destroyed by its creator. The modern discourse about AI alignment and existential risk is, in many ways, a recapitulation of anxieties that humans have been articulating for at least three thousand years.

By the early 20th century, cinema had completely solidified this mental model. In Fritz Lang’s 1927 masterpiece Metropolis, the “Maschinenmensch“ (machine-human) False Maria established the ultimate, enduring visual language of the humanoid robot. Metropolis was pure science fiction vaporware, but its usability impact was lasting. It conditioned the public to expect that when artificial intelligence finally arrived, it would not be an invisible statistical spreadsheet; it would be an embodied, conversational humanoid. It set the “Anthropomorphic Trap” decades before the first computer existed.

The Mechanical Turk and the Original “Wizard of Oz” Interface

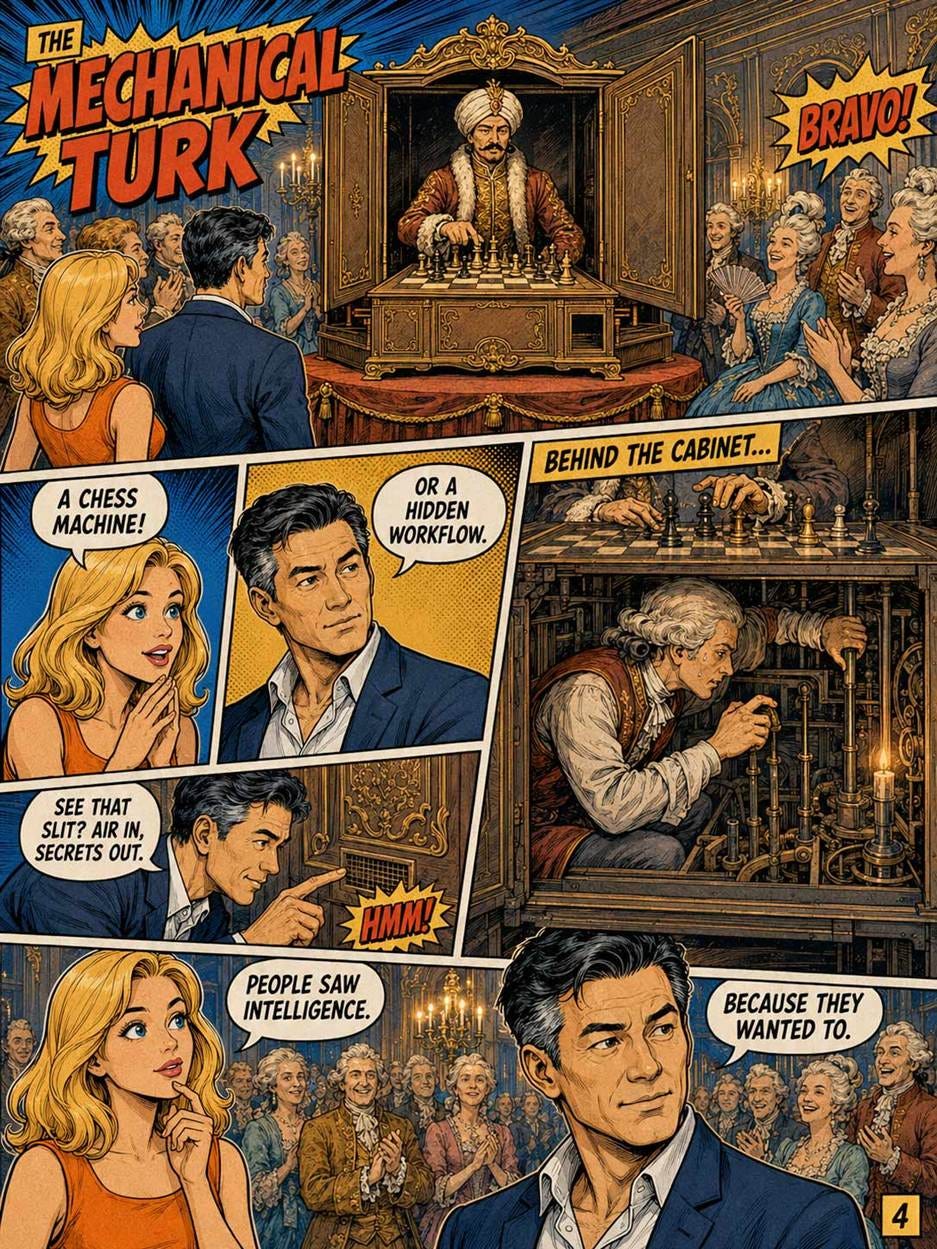

Because the human desire for an intelligent machine was so overwhelming, early entrepreneurs realized they could achieve immense commercial success simply by faking the backend. The most notorious example is the Mechanical Turk, constructed in 1770 by Wolfgang von Kempelen. (Much later, Amazon named a service offering after this proto-AI.)

The Mechanical Turk was presented to the courts of Europe as a magnificent clockwork automaton that could play and win chess against human challengers. It toured the world for decades, baffling audiences and defeating dignitaries like Napoleon Bonaparte and Benjamin Franklin. The reality? It was a brilliantly designed physical UI that hid a massive UX fraud. The cabinet contained a hidden, cramped compartment where a human chess master pulled levers to move the mechanical arms.

From a UX research perspective, the Mechanical Turk was the very first “Wizard of Oz” prototype. In modern UX methodology, a Wizard of Oz test involves a user interacting with what they believe is an automated system, while a human researcher secretly operates the system behind the scenes to test the interaction paradigm before writing expensive code. Von Kempelen weaponized this testing method into a deceptive product. He proved a terrifying psychological law: if the physical interface (the whirring clockwork and the wooden mannequin) projects sufficient complexity, the user will eagerly suspend disbelief and attribute autonomous genius to the machine, entirely ignoring the possibility of a manual backend.

The legacy of the Mechanical Turk is arguably more relevant today than ever. The history of the 21st-century tech boom is littered with “Turk-ware.” Throughout the 2010s and early 2020s, countless startups launched “revolutionary AI” scheduling assistants, receipt scanners, and autonomous grocery checkout systems that were, in reality, just massive offshore teams of human gig workers desperately typing behind a digital curtain.

Babbage, Lovelace, and Genuine Theoretical Proto-AI

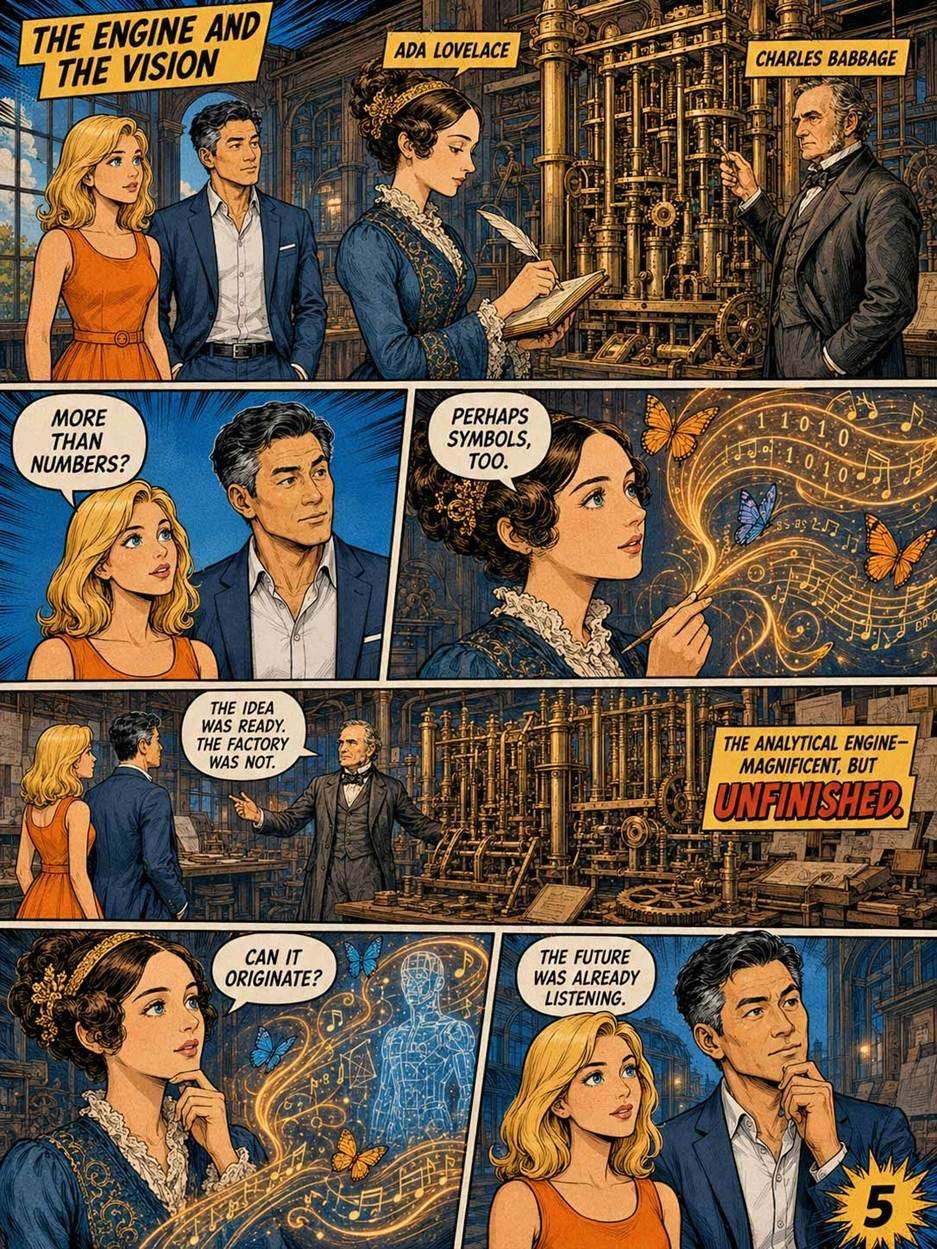

Charles Babbage designed the Analytical Engine in the 1830s and 1840s. It was a mechanical, programmable, general-purpose computer, a century ahead of any working equivalent. Babbage never finished building it, partly because Victorian-era machining could not produce parts to the required precision and partly because Babbage was difficult to work with.

Ada, Countess of Lovelace, the mathematically gifted daughter of the poet Lord Byron, wrote extensive notes on the Analytical Engine in 1843. Her notes included what is generally considered the first algorithm intended for execution by a machine, and more importantly for AI history, speculated that such a machine might one day compose music or manipulate symbols of any kind, not merely numbers.

Lovelace also articulated what became known as “Lady Lovelace’s Objection”: that machines could only do what they were explicitly told to do and could not originate anything. Alan Turing engaged directly with this objection in his 1950 paper. The argument continues today, in slightly different form, every time someone debates whether AI is “really creating” or “just predicting.”

Three Lessons from the Proto-AI Era

First, humans wanted artificial intelligence long before they could build it, and that desire shaped what they were eventually willing to accept as the real thing. The cultural appetite for AI predates the technology by millennia. This is part of why ChatGPT was received with such intensity when it launched: it landed in soil that had been prepared by three thousand years of imagination.

Second, the line between real AI and theatrical AI has always been blurry, and audiences have always been willing to overlook the difference. The Mechanical Turk fooled Napoleon. ChatGPT users in 2023 routinely confused fluent text generation with genuine understanding. The deception is sometimes deliberate, sometimes accidental, and almost always commercially advantageous.

Third, our cultural expectations of AI were set by fiction, not by engineering. Researchers must constantly correct misconceptions imported from films like Metropolis, Terminator, and Her. Product strategists should remember this when designing AI interfaces: your users will arrive with expectations shaped by a century of cinema, not by your spec document. You can fight those expectations or design around them, but you cannot ignore them.

The real history of AI begins in 1943. The desire for AI began much earlier, and that desire is part of what we are designing for.

1943: The First Neural Network (in Theory)

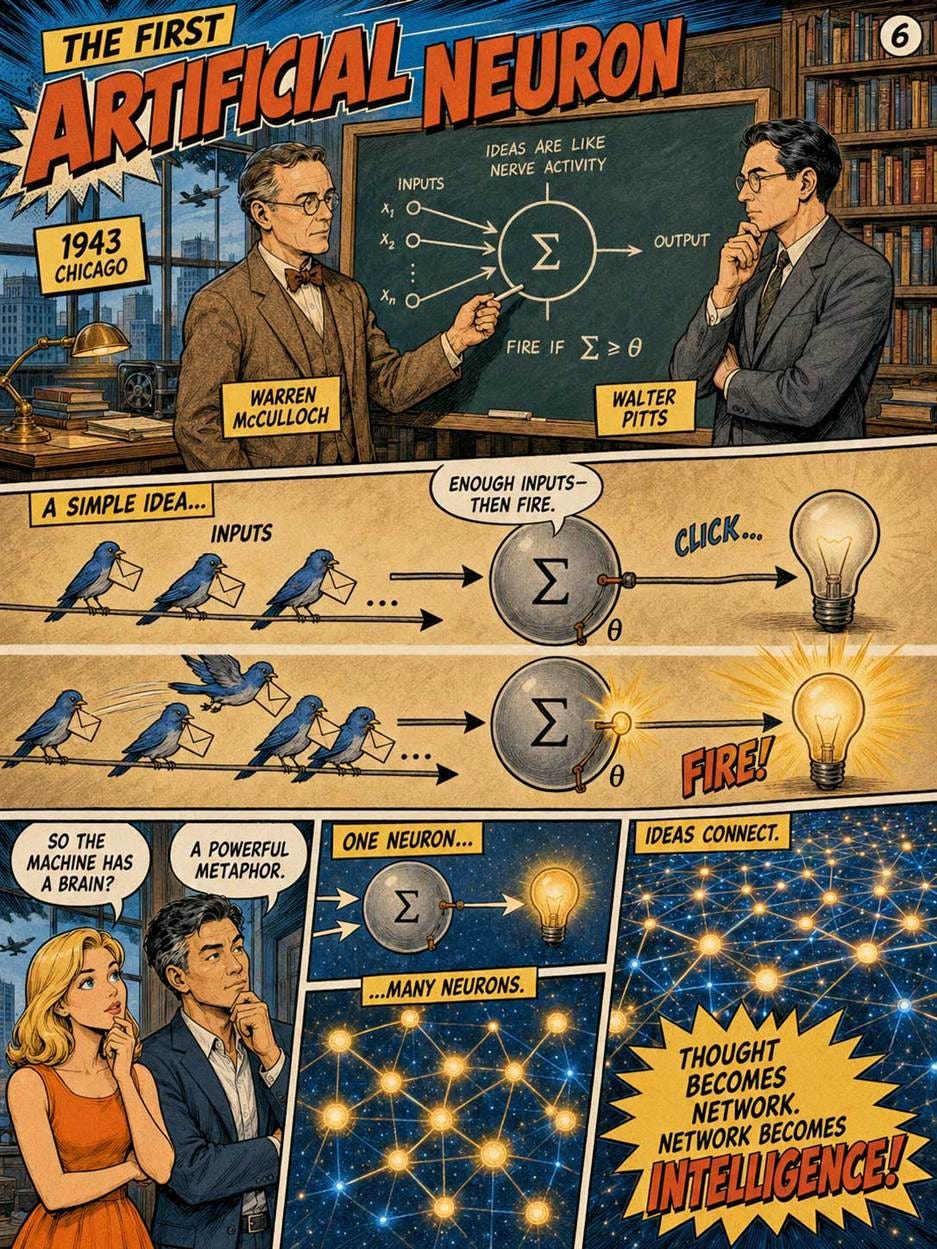

The usual starting point for AI history is 1943, when Warren S. McCulloch and Walter H. Pitts published “A Logical Calculus of the Ideas Immanent in Nervous Activity.” McCulloch was an American neurophysiologist and psychiatrist who wanted a mathematical account of the mind; Pitts was a self-taught logician whose early work connected logic, computation, and neuroscience.

Their artificial neuron was not like today’s vast neural networks. It was a simple, idealized model of a biological nerve cell. A neuron could receive inputs, apply a threshold, and fire or not fire. This was important because it showed that networks of simple elements could, in principle, compute logical functions. Thought could be modeled as structured activity in a network. The paper’s own language rested on the “all-or-none” character of neural activity, which made the neuron resemble a logical switch.

The connection to modern AI is direct but incomplete. GPT-class models do not use McCulloch-Pitts neurons in the literal 1943 form. They use numerical units, learned weights, differentiable training, and massive parallel computation. But the founding intuition survived: intelligence may emerge from many simple computational units connected in a network. McCulloch and Pitts gave AI one of its deepest metaphors, and deep learning later turned that metaphor into an industrial system.

By naming their mathematical constructs “neurons,” they birthed the “electronic brain” metaphor, inadvertently inviting humans to anthropomorphize the technology and expect machines to possess human-like common sense.

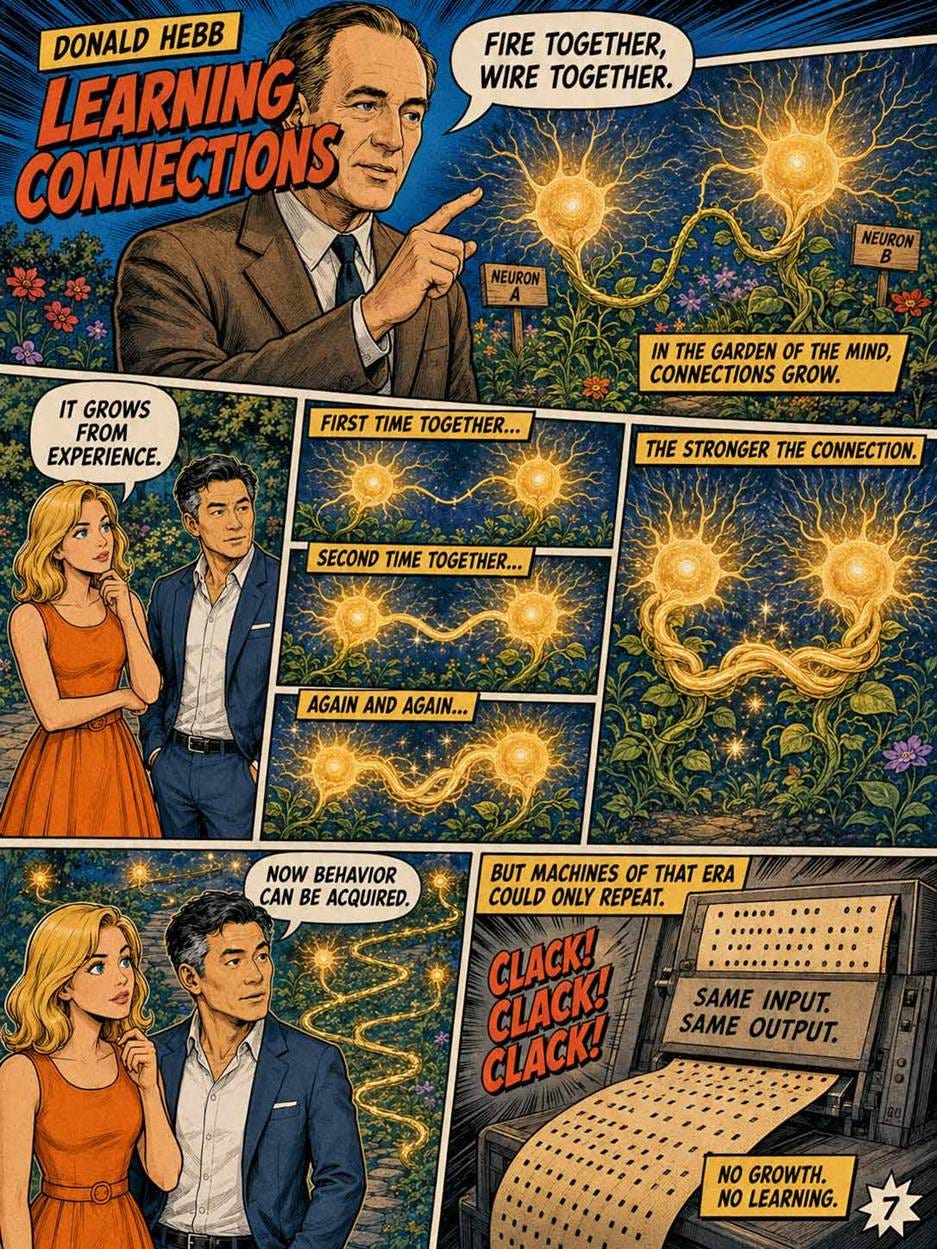

1949: Hebbian Learning

In 1949, Donald Hebb published The Organization of Behavior. Hebb was a Canadian psychologist and neuropsychologist who sought to explain learning as a biological process in the brain. His central idea is often summarized as: cells that fire together wire together.

Hebb’s claim was that repeated co-activation strengthens connections. This was not a full machine-learning algorithm. It was a theory about learning in nervous systems. But it gave AI a second foundational idea: intelligence should not only be programmed; it should be acquired. A system should improve by changing its internal connections in response to experience.

Modern neural networks are not simple Hebbian systems. A large language model learns through optimization methods that adjust billions or trillions of parameters to reduce prediction error. Yet the conceptual continuity is obvious. Learning means changing connection strengths. What began as a theory of the brain became, after many transformations, a practical method for training artificial systems.

For product strategy, Hebb’s idea matters because it marks the distinction between software that merely executes rules and software that adapts from examples. Most of AI’s commercial power comes from this second category.

1950: The Turing Test and the Usability of Deception

In 1950, Alan Turing published “Computing Machinery and Intelligence.” Turing was a British mathematician who broke the Nazi Enigma code during World War II, and his main research helped define the theory of computation. Rather than asking whether machines can “really” think, he proposed the imitation game: judge the machine by its conversational behavior.

Turing’s contribution was not just philosophical. It was a UX insight before UX existed as a discipline. Users do not inspect a machine’s metaphysical status. They interact with its behavior. They ask whether it answers, helps, misleads, hesitates, recovers, remembers, and adapts. The intelligence of a system is experienced through an interface.

Modern AI has not “solved” Turing’s question in a clean philosophical sense. But ChatGPT made Turing’s framing newly practical. The public did not adopt ChatGPT because it knew how transformers work. People adopted it because it behaved as a conversational partner for writing, coding, planning, tutoring, summarizing, and exploring ideas. The interface made the capability legible.

Turing intuitively understood that natural language is the ultimate user interface. By proposing a text-based dialogue loop as the benchmark, he shifted the definition of AI away from internal architecture and onto user perception, inadvertently inventing the first usability test for AI.

At the same time, Turing established a dangerous UX anti-pattern: human mimicry.

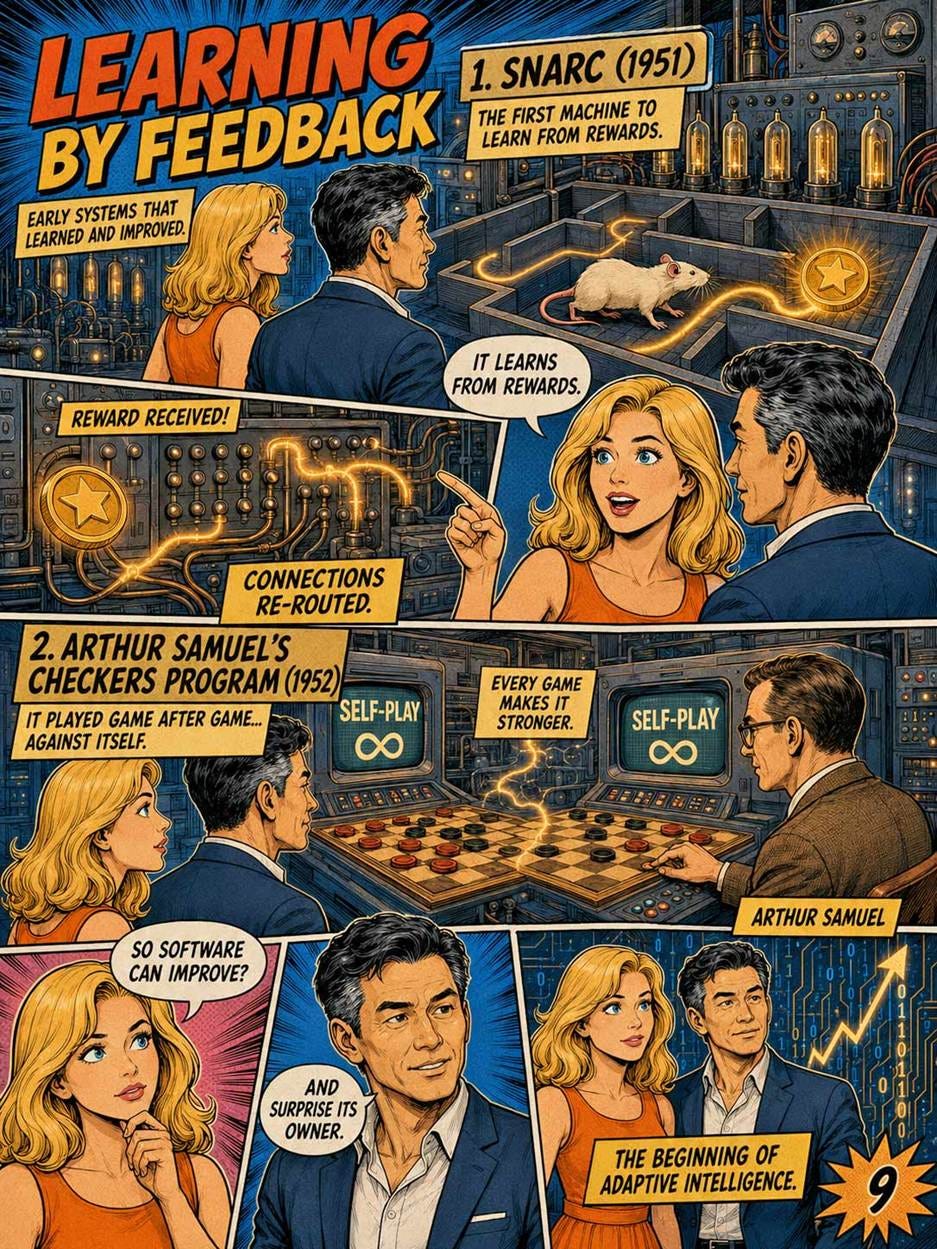

1951: SNARC and Hardware-Software Co-Design

Marvin Minsky was a pioneering cognitive scientist who would shape decades of AI research, and Dean Edmonds was a pragmatically brilliant graduate student who possessed the engineering skills to build physical computing hardware.

Their Stochastic Neural Analog Reinforcement Calculator (SNARC) was the first physical machine built to simulate an artificial neural network, constructed from 3,000 vacuum tubes and surplus airplane autopilot mechanisms to help a virtual “rat” learn to navigate a maze.

SNARC took neural networks out of theoretical mathematics and put them into physical, tangible hardware. Most importantly, it demonstrated reinforcement learning in action; when the virtual rat made a correct turn, the machine adjusted the probability weights of its vacuum tubes, proving a machine could learn via reward mechanisms.

Reinforcement learning is the absolute cornerstone of modern AI alignment and usability. When we use Reinforcement Learning from Human Feedback (RLHF), we are using the software-based descendant of the exact learning mechanism Minsky and Edmonds hardwired into SNARC. The fundamental concept of learning via reward completely carried through.

1952: Arthur Samuel’s Checkers and the Loss of Determinism

Arthur Samuel was an IBM researcher and a pioneer of computer gaming who believed teaching computers to play games was the best path to solving general intelligence.

Samuel wrote a checkers-playing computer program that possessed a scoring function to evaluate board positions and remembered every position it had ever seen. By playing against itself thousands of times, the program learned to play checkers far better than Samuel himself could, prompting him to coin the term “Machine Learning” in 1959.

This was a massive paradigm shift in user capability and mental models. Before Samuel, the heuristic of computing was that a machine could only do exactly what the programmer explicitly commanded. Samuel introduced a new approach: the loss of determinism, proving a machine could independently optimize its behavior.

Self-play reinforcement learning, which Samuel pioneered, is exactly how modern reasoning models discover novel solutions that human engineers never explicitly programmed. Game-playing was a driving force behind many more recent AI breakthroughs, from IBM’s Deep Blue beating the world chess champion in 1997 to Google’s DeepMind doing the same in the more difficult game of Go.

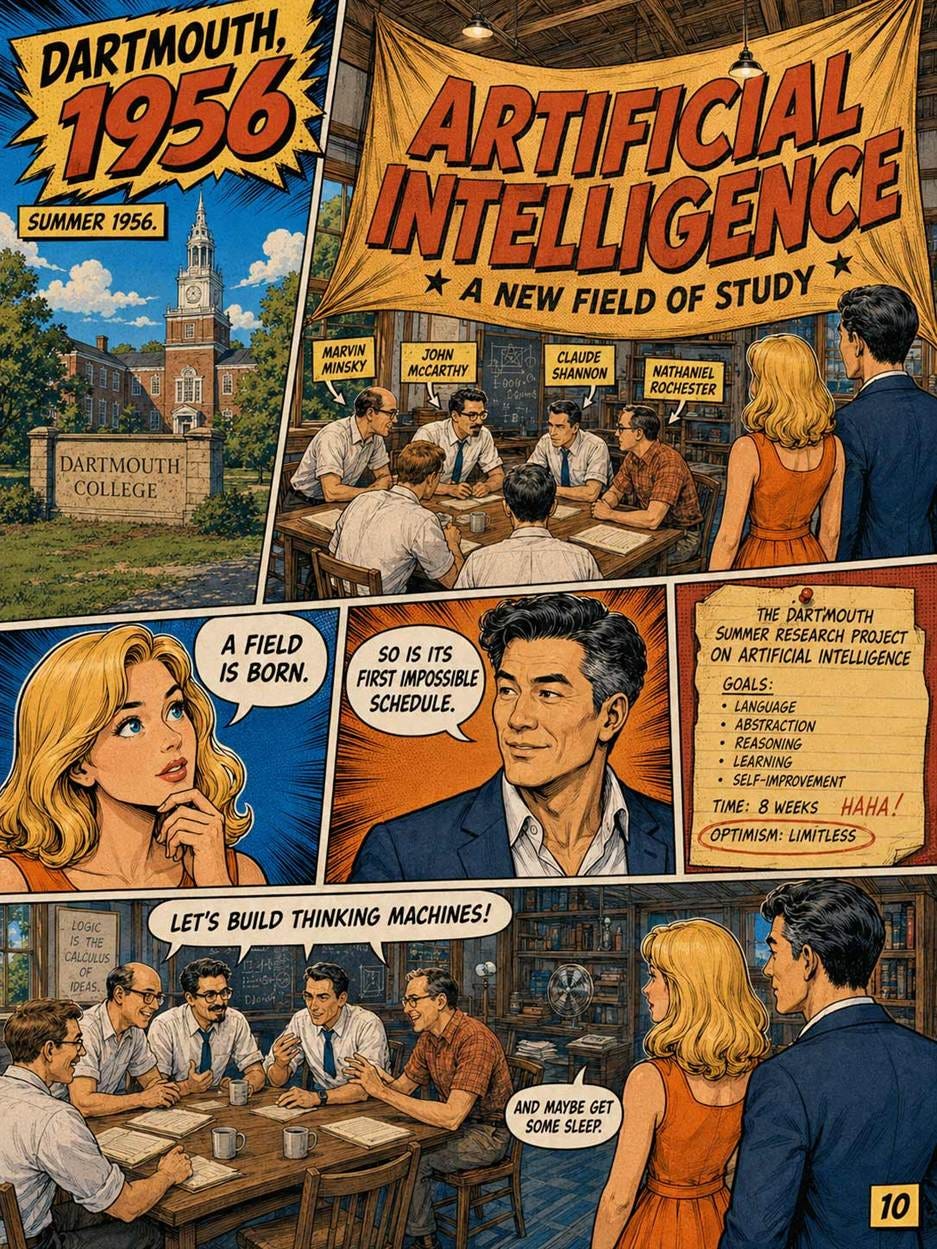

1956: The Dartmouth Conference Names “Artificial Intelligence”

In the summer of 1956, John McCarthy, a young assistant professor at Dartmouth College, organized a six-week workshop with Marvin Minsky (then at Harvard), Claude Shannon (the founder of information theory at Bell Labs), and Nathaniel Rochester (an IBM engineer).

McCarthy coined the term “artificial intelligence” for the workshop’s funding proposal. The conference itself produced no specific breakthroughs, but it established AI as a coherent academic discipline with shared vocabulary and goals.

The Dartmouth attendees were extravagantly optimistic. The proposal described a “2 month, 10 man study of artificial intelligence” and assumed that learning, reasoning, and other aspects of intelligence could be precisely described and simulated by machines. They were off by approximately 70 years, and possibly more. Dartmouth’s importance was institutional as much as technical. It made AI a field. It gave researchers a shared banner, a shared ambition, and eventually shared funding.

Dartmouth also established AI’s recurring weakness: optimism outran capability. The organizers believed rapid progress was possible because they underestimated the complexity of everyday intelligence. For UX and product leaders, this is a permanent warning. A demo can make a capability look solved long before it is reliable enough for ordinary users, hostile inputs, messy data, or high-stakes workflows.

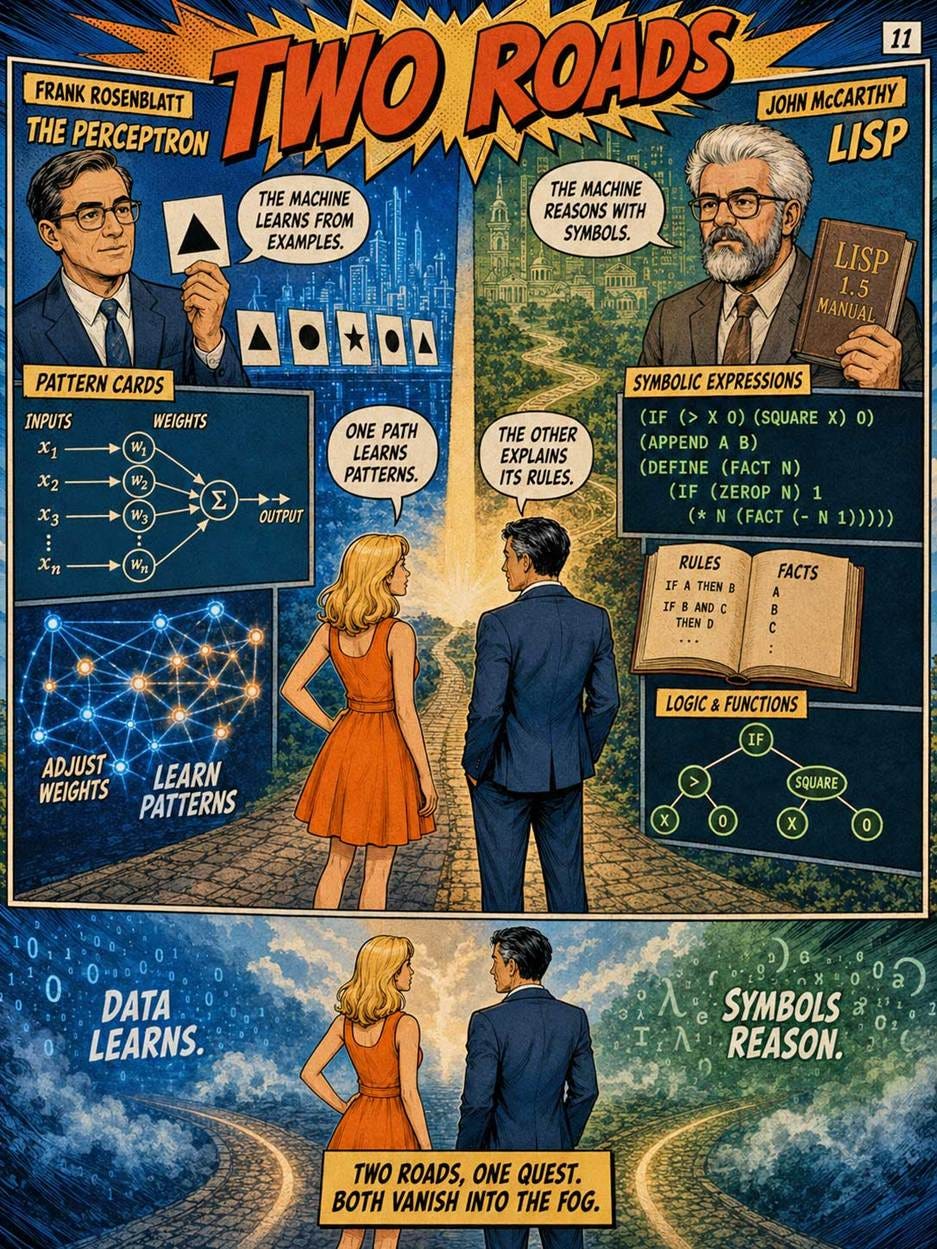

1957: Rosenblatt’s Perceptron

In 1957, Frank Rosenblatt built the first working neural network, the Perceptron. Rosenblatt was a Cornell psychologist and engineer who built one of the first learning machines inspired by neural principles. His 1958 paper described the Perceptron as a probabilistic model for information storage and organization in the brain.

The Perceptron learned to classify inputs by adjusting weights. This was a huge conceptual step. It suggested that machines could learn patterns from data rather than depend entirely on hand-coded instructions. At the same time, early perceptrons were very limited. A single-layer perceptron could only solve a narrow family of problems. Its limitations later became famous.

The modern relationship is clear. The Perceptron was a direct ancestor of neural networks, but not of modern performance. Today’s models are deep, multi-layered, trained on huge datasets, and optimized with sophisticated methods. The early perceptron showed the promise of learned representation. It did not yet have the architecture, algorithms, data, or compute to fulfill that promise.

1958: Lisp and Symbolic AI

While neural ideas were developing, another tradition became dominant: symbolic AI. John McCarthy, fresh from organizing Dartmouth, created the LISP programming language in 1958 as a language for symbolic computation and AI research. The early LISP work at MIT was tied to McCarthy’s “Advice Taker” vision: a program that could represent facts, reason about them, and accept new knowledge.

Symbolic AI treated intelligence as manipulation of explicit symbols. This approach was attractive because it resembled logic and language. Rules could be inspected. Inferences could be traced. Knowledge could be written down. For many years, this was the mainstream of AI research.

Modern AI did not simply discard symbolic AI. It displaced it as the main method for open-ended perception and language, because hand-coded symbolic systems were brittle. They failed when the world refused to fit the tidy categories in the knowledge base. But symbolic methods survive inside modern products as tools, constraints, workflows, schemas, programmatic calls, search indexes, and business rules. A 2026 AI agent using a language model to call APIs is not pure symbolic AI, but it often needs symbolic structure to act reliably.

This is a useful product lesson: neural models are good at interpretation and generation; symbolic systems are good at explicit structure and enforceable operations. Good AI products often combine both.

1966: ELIZA and the First Chatbot Illusion

In 1966, Joseph Weizenbaum introduced ELIZA. Weizenbaum was an MIT computer scientist who later became one of the sharpest critics of AI enthusiasm. ELIZA used pattern matching and scripted transformations to imitate a psychotherapist-like conversation. The program decomposed user inputs and reassembled responses based on rules and keywords.

ELIZA did not understand anything. It used about 200 lines of code. Yet Weizenbaum was disturbed to find that users, including his own secretary, formed emotional attachments to it and confided personal information to it.

ELIZA was technically shallow but experientially powerful. Users attributed understanding to a system that had none. This became known as the ELIZA effect: the tendency to perceive humanlike comprehension in a machine that merely produces plausible conversational behavior.

ELIZA’s method was soon abandoned for serious language understanding. Pattern-matching scripts do not scale to open-ended conversation. Yet ELIZA is one of the most important milestones for UX designers because it showed that interface behavior can create an illusion of intelligence. ChatGPT is not ELIZA; it uses learned language models rather than simple scripts. But every conversational AI product still inherits ELIZA’s danger. Fluency is not the same as understanding, and users will over-trust a well-phrased answer unless the product design helps them calibrate confidence.

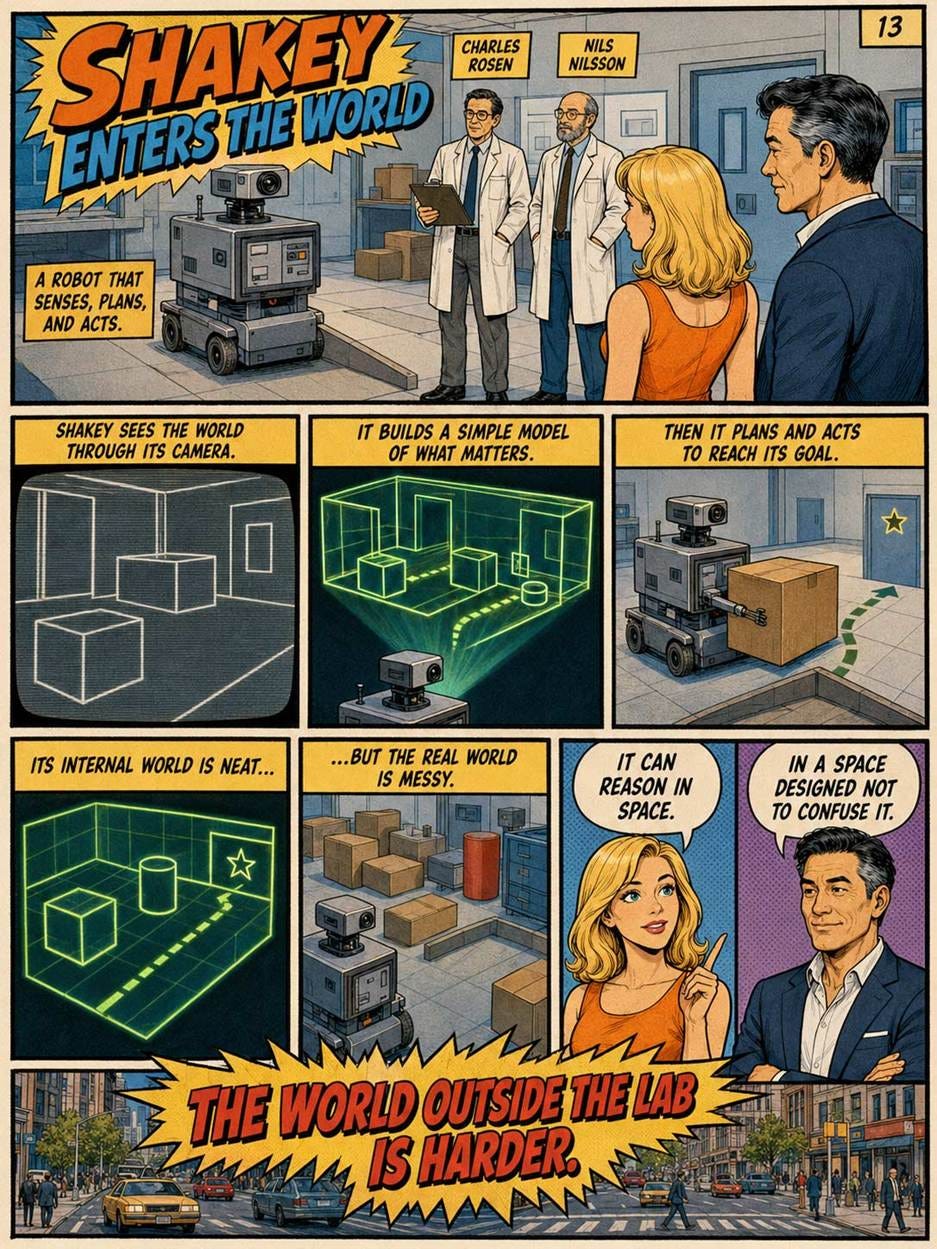

1966–1972: Shakey the Robot

At SRI International, Shakey became one of the first mobile robots to combine perception, planning, and action. Charles Rosen, an engineer and founder of SRI’s Artificial Intelligence Center, helped lead the project; Nils Nilsson, an AI researcher and roboticist, contributed to Shakey’s planning methods and later became one of the field’s major chroniclers.

Shakey operated in a simplified world of rooms, boxes, and obstacles. It could perceive parts of its environment, build a model, plan actions, move, and recover from some errors. SRI’s work on Shakey was sponsored by ARPA, later DARPA, and ran roughly from 1966 to 1972.

Shakey mattered because it connected AI to the physical world. It was not enough to solve puzzles on paper. A robot had to sense, decide, and act under uncertainty. Shakey also gave AI planning a concrete domain. The lineage continues into autonomous vehicles, warehouse robots, drones, domestic robots, and embodied agents.

The abandoned part was the assumption that a robot could mostly reason from a clean symbolic model of the world. Real environments are noisy, dynamic, and visually complex. Modern robotics uses learned perception, statistical estimation, simulation, and control methods alongside planning. The dream survived; the early abstraction did not.

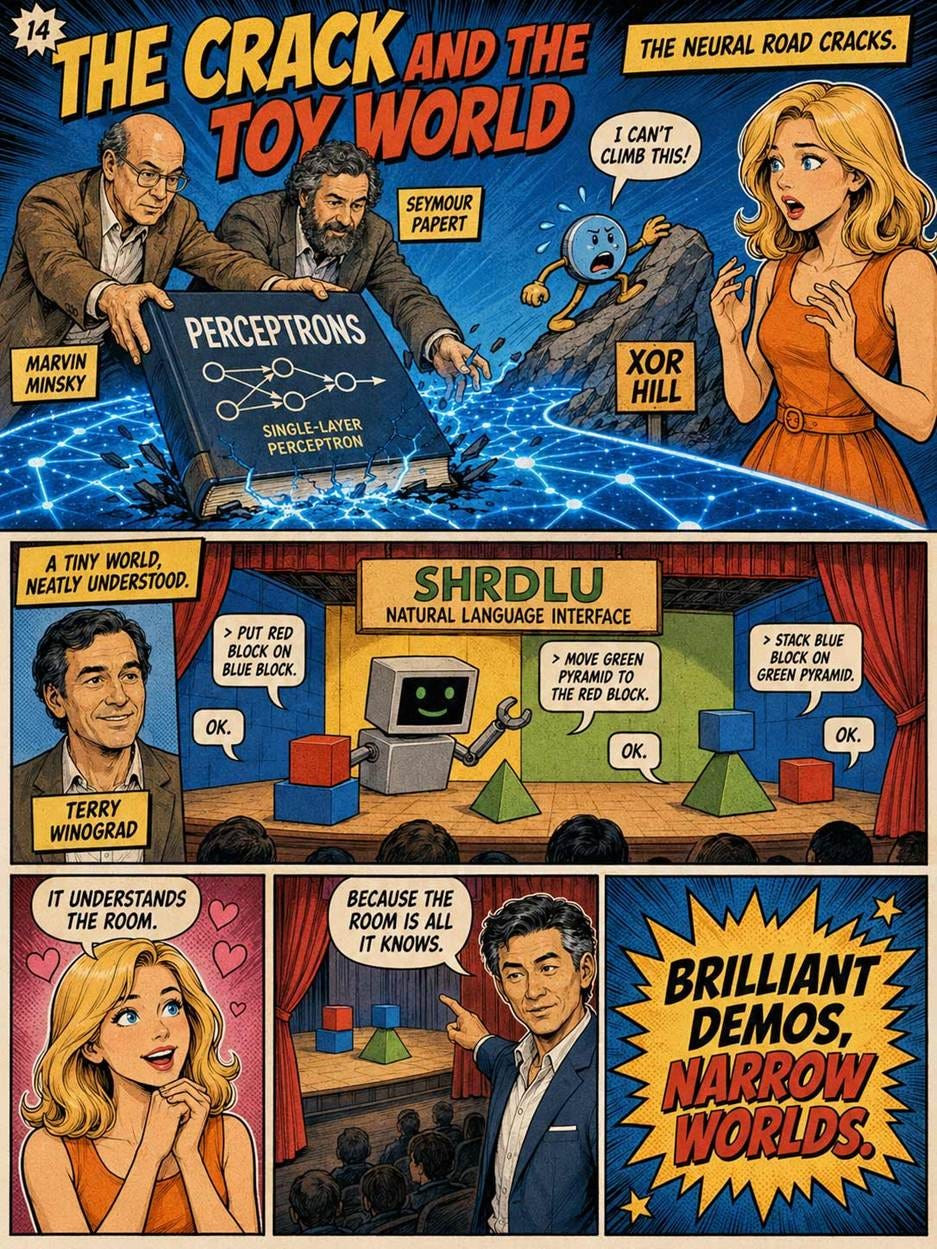

1969: Minsky and Papert Kill Neural Networks

In 1969, Marvin Minsky and Seymour Papert published Perceptrons. Papert was a South African-born mathematician and computer scientist who co-founded MIT’s AI Lab and later became famous for Logo and constructionist learning. Their book rigorously analyzed what single-layer perceptrons (like Rosenblatt’s pioneering 1957 work) could and could not do.

The book is often blamed for chilling neural-network research. That is partly unfair and partly true. Minsky and Papert did not prove that all neural networks were useless. They showed that the perceptrons of the time had severe limitations. But because multilayer learning methods were not yet practical, the field shifted toward symbolic AI.

In retrospect, this episode is a lesson in timing. A critique can be correct for the systems available at the time and still not predict the future. Neural networks needed better algorithms, more data, and far more compute. Once backpropagation and GPUs arrived, the old limitation no longer defined the field.

The Perceptrons book is a cautionary tale about how a partial truth, presented authoritatively by famous researchers, can derail an entire field for a generation. A technology may fail in one decade because the surrounding stack is not ready, then become dominant decades later.

1972: SHRDLU

Terry Winograd, then a graduate student at MIT, built SHRDLU as his doctoral thesis. It was a natural language program that operated in a simulated blocks world of geometric shapes.

SHRDLU could understand and execute commands like “Put the red block on top of the green pyramid.” It maintained a world model and could answer questions about its actions. To observers in 1972, it seemed like a major step toward genuine language understanding.

In retrospect, SHRDLU was a dead end. Its capabilities did not generalize beyond the blocks world. The hand-coded knowledge structure that made it work could not scale to real-world language.

The lesson echoes through to 2026: systems that work in narrow demonstration domains often fail to scale. Modern language models work because they were trained on a substantial fraction of all human-written text, not because anyone hand-coded their knowledge. Winograd later became one of the founding figures of HCI at Stanford and was Google co-founder Larry Page’s PhD advisor.

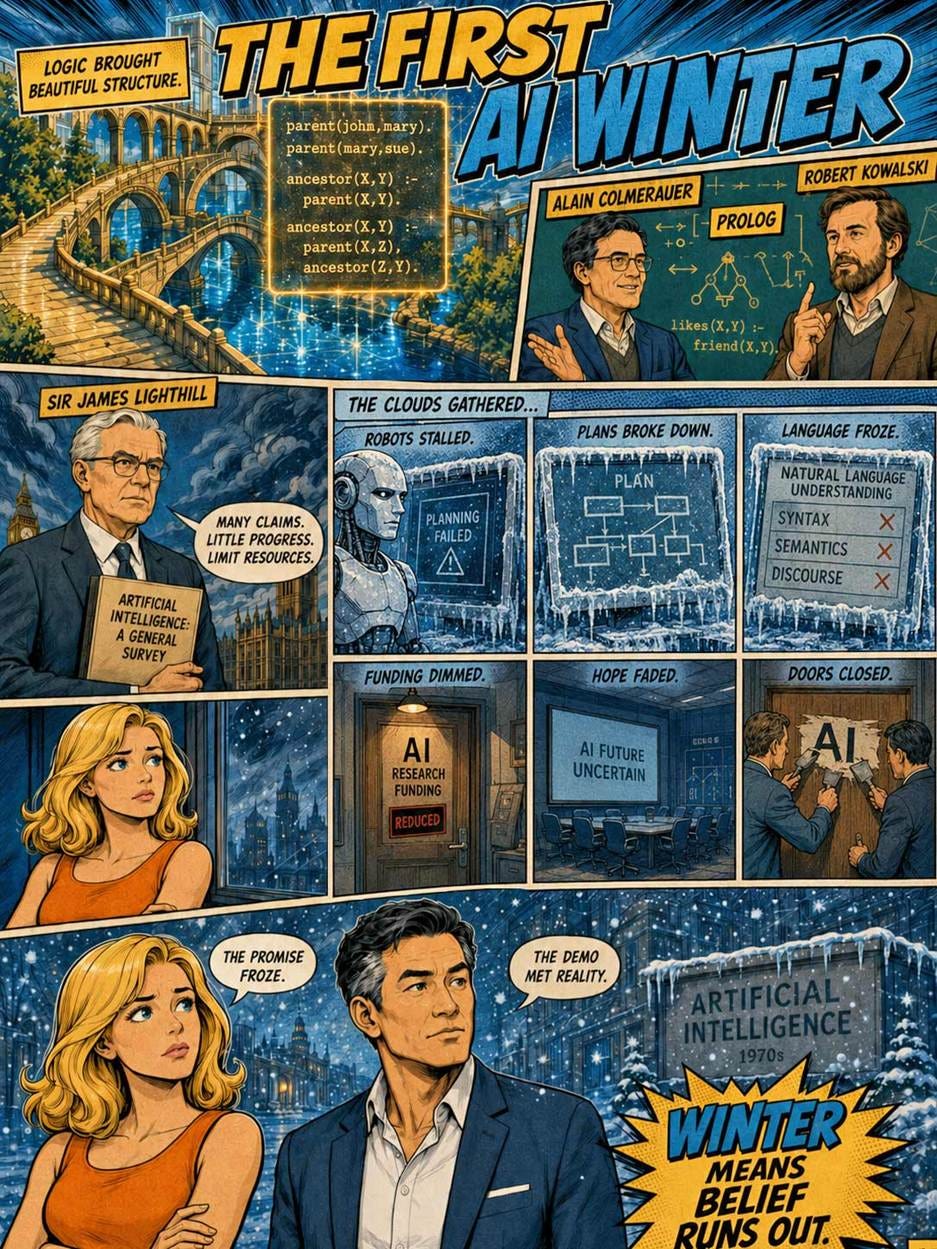

1972–1973: Prolog, Logic Programming, and the Lighthill Report

In the early 1970s, logic programming became a major AI idea. Alain Colmerauer, a French computer scientist, and Robert Kowalski, a logician and AI researcher, helped establish Prolog and the view that computation could be expressed as logical relations. Logic programming later influenced European AI and the Japanese Fifth Generation project.

Today, Prolog is a footnote. Modern AI does not work by formal logical inference. But Prolog’s legacy persists where formal reasoning is genuinely required: theorem provers, certain database systems, and specialized regulatory and verification applications.

Mid-1970s: First AI Winter

At almost the same time, AI suffered a public setback. In the United Kingdom, Sir James Lighthill, a British applied mathematician, produced a critical review of AI research. The Lighthill Report, submitted in 1972 and published in 1973, criticized the field’s progress in areas such as robotics and language processing and helped trigger reduced support for AI research in Britain.

DARPA funding in the United States was cut sharply. Many AI researchers reframed their work as something else. This was the first “AI winter.” It lasted roughly from 1974 to 1980.

The contrast is revealing. AI had beautiful formalisms, but messy reality resisted them. Systems worked in small worlds and failed in broad contexts. This gap between demo and deployment would recur for decades.

Modern AI is vulnerable to the same pattern. Benchmarks and demos are useful, but real products face distribution shift, user misunderstanding, malicious use, latency, cost, privacy, integration, and accountability. Lighthill’s critique was harsh, but the underlying product question was valid: where was the reliable value?

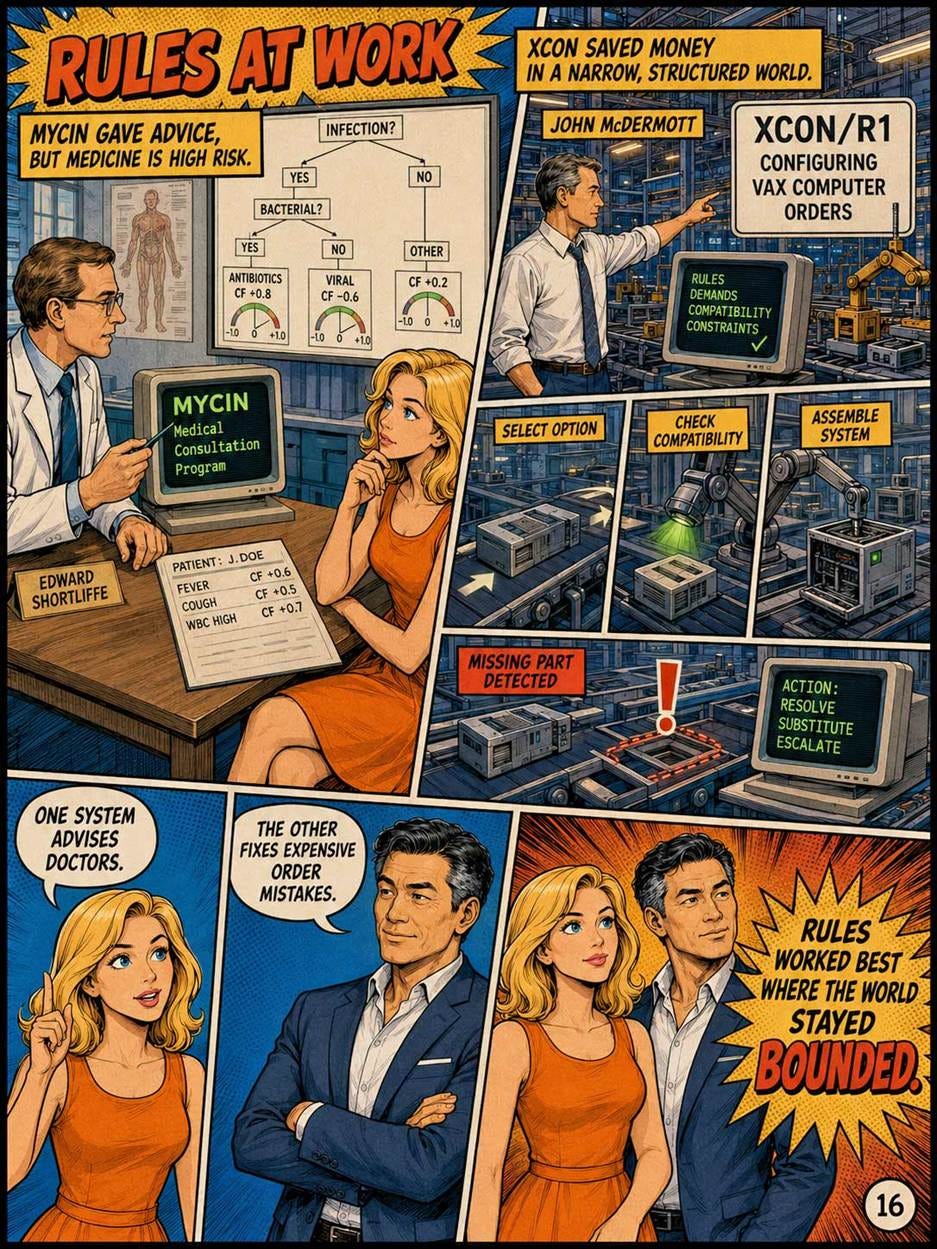

Late 1970s-1980s: Expert Systems

Edward Shortliffe, a Stanford physician-researcher, built MYCIN in the early 1970s as part of his PhD work. It was an expert system for diagnosing bacterial infections that incorporated about 600 rules captured from interviews with infectious-disease specialists.

MYCIN advised physicians on bacterial infections and antibiotic treatments. It used a knowledge base of rules and an inference engine, along with certainty factors to express imperfect confidence. It could also explain aspects of its reasoning, which made it unusually relevant to high-stakes decision support.

MYCIN performed approximately as well as human experts in controlled tests. It was never deployed clinically, partly because of liability concerns about who would be responsible if its recommendations harmed a patient. (Forty years later, this exact question still complicates clinical AI deployment.)

Expert systems became the dominant form of commercial AI in the 1980s.

The expert systems approach of encoding human expertise as explicit rules was eventually abandoned because it did not scale. Capturing knowledge from experts was expensive, the rules were brittle, and the systems could not handle situations their authors had not anticipated.

But the expert systems era left two important legacies. First, it established the commercial viability of AI, paving the way for later investment. Second, it taught the field, painfully, that explicit rules cannot capture the full subtlety of human expertise. This lesson directly motivated the shift to learned systems that now dominate AI.

1980: XCON and the First Big Commercial AI Payoff

Around 1980, Digital Equipment Corporation began using XCON, also known as R1, to configure VAX computer systems. John McDermott, a Carnegie Mellon AI researcher, was the central figure behind R1/XCON. The system translated customer orders into valid computer configurations, catching missing or inconsistent components before manufacturing and delivery.

XCON was the first AI system to achieve massive, undeniable commercial success, saving DEC an estimated $40 million a year. It succeeded where MYCIN failed because of its bounded context; the domain of DEC computer parts was finite, knowable, and heavily structured, preventing the catastrophic edge-case failures seen in broader systems.

It did not need to understand the bigger world. It needed to solve a painful business problem: configuring complex products correctly. This is one of the most important product lessons in AI history. Narrow, expensive, repetitive, expertise-heavy tasks are excellent AI targets.

XCON also demonstrated the limits of rule-based AI. As products changed, rules had to be updated. Maintenance became expensive. The system’s value was real, but the approach did not generalize easily. In modern terms, XCON was a successful vertical AI product with high operational burden.

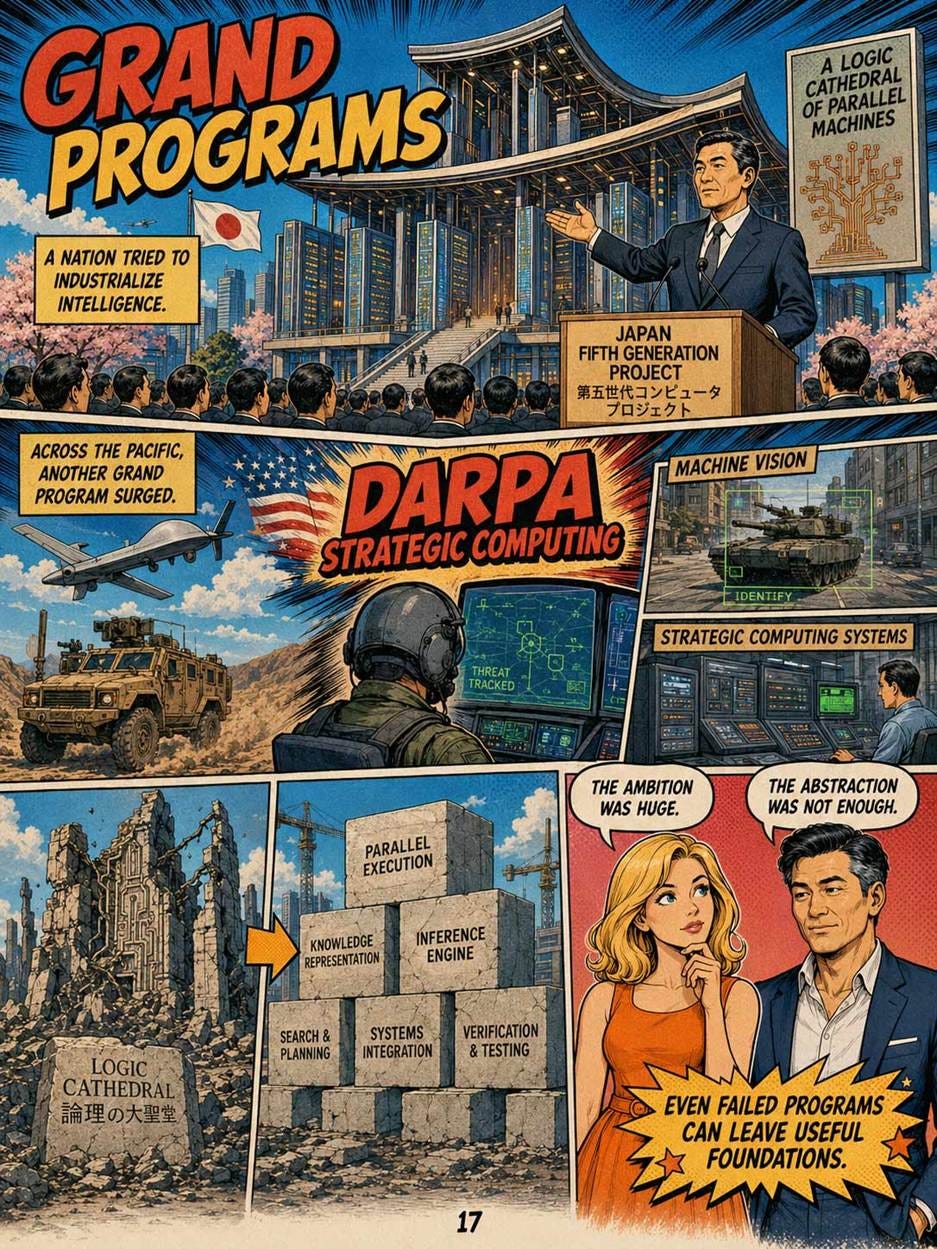

1982: Japan’s Fifth Generation Computer Project

In 1982, Japan’s Ministry of International Trade and Industry launched the Fifth Generation Computer Systems project. Kazuhiro Fuchi, a Japanese computer scientist associated with the Institute for New Generation Computer Technology, became one of the project’s prominent technical figures. The project aimed to build a new generation of knowledge-processing computers based on logic programming and parallel computing.

The Fifth Generation project was a major government-industrial initiative. It frightened competitors abroad because it suggested that Japan might leap ahead in AI, just as Japanese firms had become powerful in electronics and computing hardware. It also stimulated responses elsewhere, including American and European research initiatives.

As a commercial vision, the project disappointed. The project spent roughly $320 million over its lifetime and produced interesting research but no viable products. Logic programming did not become the universal route to intelligent machines. The systems were too brittle, too specialized, and too disconnected from the data-driven methods that later prevailed. But the project was not meaningless. It kept attention on AI, parallelism, and knowledge processing. It also showed that governments can mobilize research, but they can still bet on the wrong technical abstraction.

The Japanese project represents the grandest failure of Symbolic AI. Its reliance on predicate logic to process language was completely abandoned. The modern LLMs we use today achieved these exact conversational goals using the opposite methodology: unstructured data and probabilistic neural networks. However, Fuchi’s intuition about the necessity of massively parallel computing hardware was vindicated, as parallel computing became central to AI. But not mainly through logic-programming machines. It came through GPUs, tensor processors, and large-scale neural training. The hardware dream was right; the software paradigm was wrong.

1983: DARPA’s Strategic Computing Initiative

In 1983, DARPA launched the Strategic Computing Initiative. Robert Kahn, a computer scientist and DARPA leader best known for his role in internet architecture, helped shape the agency’s computing agenda; many program managers and contractors then pursued applications such as autonomous vehicles, pilot assistants, image understanding, and advanced architectures.

The initiative was ambitious. It aimed to push AI, computer architectures, and military applications together. The Autonomous Land Vehicle became one of its visible projects, linking computer vision, planning, and control.

The Strategic Computing Initiative did not produce general AI. But it advanced technologies that later became important in robotics, vision, and autonomous systems. Government-funded AI often works this way. The original headline goal may be overambitious, yet components such as sensors, algorithms, datasets, hardware, and trained researchers can seed later commercial markets.

For product strategists, the point is that platform technologies often mature indirectly. A failed grand program may still produce the substrate for later successes.

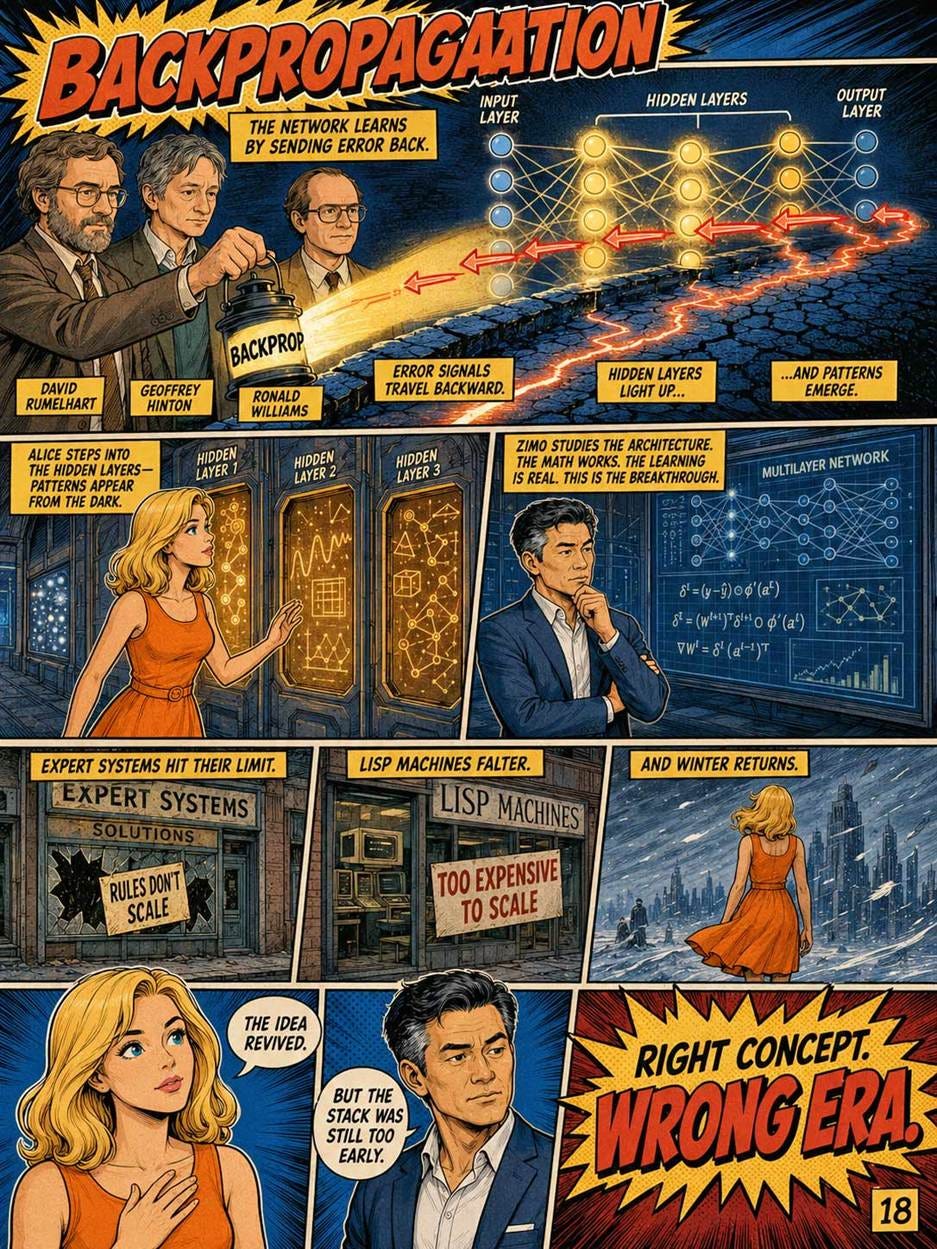

1986: Backpropagation Restores Neural Networks

The algorithm that would eventually bring neural networks back from the dead is called backpropagation: short for “backward propagation of errors.” In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams published the modern backpropagation breakthrough for neural networks. Rumelhart was a cognitive psychologist central to the parallel distributed processing movement; Hinton was a cognitive scientist and computer scientist who became deep learning’s most persistent advocate; Williams was a computer scientist known for his work on learning algorithms.

Backpropagation solved the problem that had killed the perceptron: how do you train a neural network with multiple layers? The answer is that you compute the error at the output, then propagate that error backward through the network, adjusting each layer’s weights by the amount they contributed to the mistake. This is conceptually simple, but it was technically transformative. Suddenly, neural networks with hidden layers (between input and output) could be trained, and multi-layer networks could learn the complex patterns that single-layer perceptrons could not.

Modern AI depends on this milestone. The specific training systems used for GPT-style models are far more complex, but the principle of learning by adjusting weights through gradients remains foundational. Backpropagation is one of the few ideas in AI history that moved from academic breakthrough to industrial backbone.

This was one of the most important events in AI history. It allowed neural networks to learn internal representations, not just classify inputs directly. However, the 1980s still lacked enough data and compute for backpropagation to reach its full power. The idea was ready before the infrastructure was ready. There was a second AI winter in the late 1980s and early 1990s, driven largely by the collapse of the expert systems market and a broader disillusionment with AI’s promises.

Late 1980s–Early 1990s: Second AI Winter

Expert systems failed to live up to their commercial promise. Maintaining large rule bases proved more expensive than expected. The specialized “LISP machines” sold by Symbolics and Lisp Machines Inc. were rendered obsolete by general-purpose workstations from Sun Microsystems (where I worked) and others. Funding dried up again.

The second AI winter lasted, depending on how you count, from about 1987 to the late 1990s. AI as a term became unfashionable; researchers rebranded their work as “machine learning,” “data mining,” or “intelligent systems.”

This rebranding pattern has not gone away. Even in 2026, research labs whose funding sources are nervous about “AI” sometimes call their work “applied machine learning” or “computational systems.” Vocabulary follows fashion.

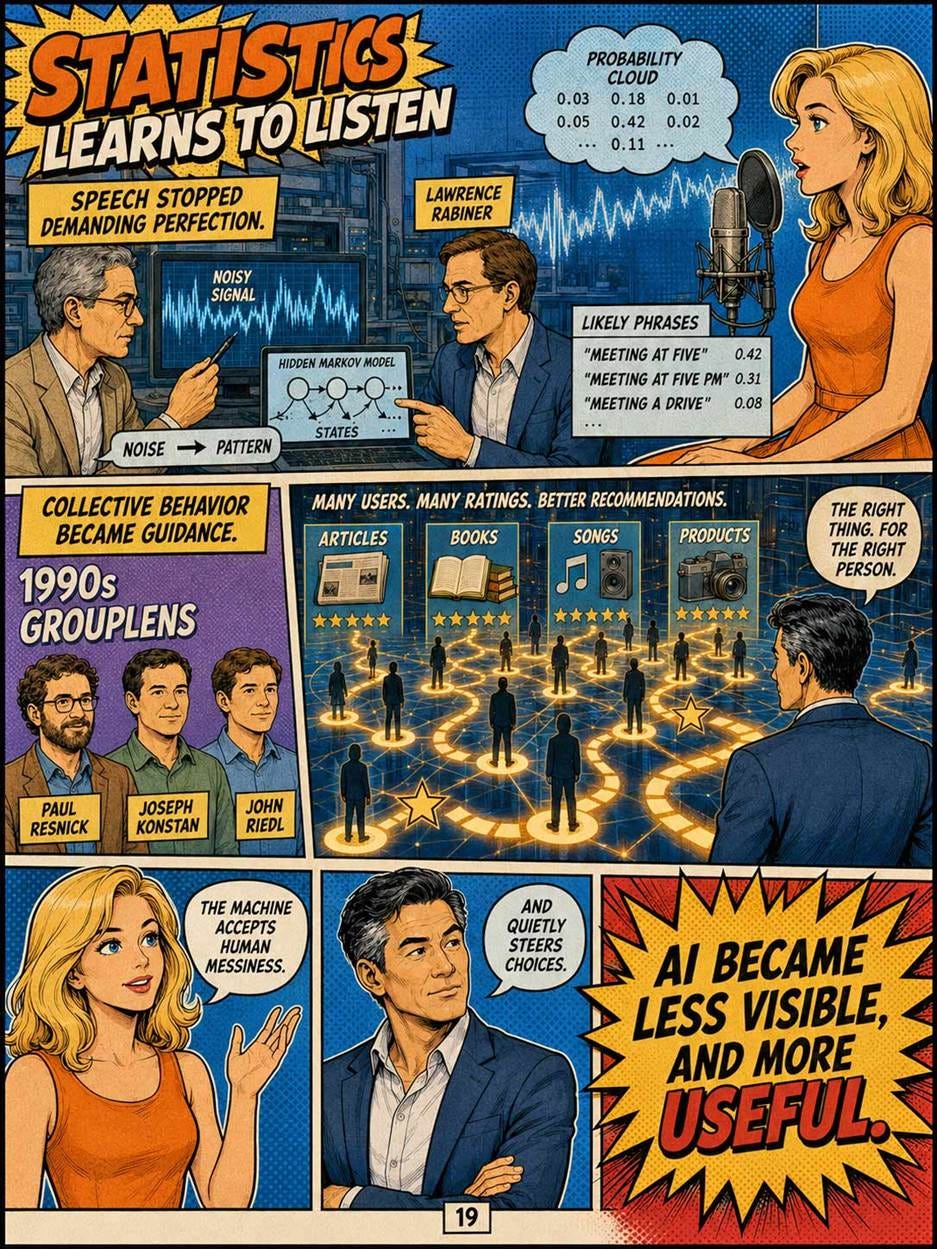

Late 1980s–1990s: Statistical Speech Recognition

Speech recognition moved from rule-heavy approaches toward statistical methods in the 1980s and 1990s. Frederick Jelinek, a Czech-American IBM researcher, was a leading advocate of statistical language modeling; Lawrence Rabiner, an electrical engineer, helped popularize hidden Markov models for speech recognition through influential tutorials and research.

The shift mattered because it changed the field’s attitude. Instead of trying to encode grammar and phonetics by hand, researchers used probabilistic models trained on data. Speech recognition improved because researchers accepted messiness and modeled likelihoods.

This was a preview of the larger AI transition from rules to data. Modern voice assistants, dictation systems, captioning tools, meeting transcription, and multimodal assistants all descend from this statistical turn. The exact methods have changed as deep learning replaced many hidden Markov pipelines, but the product premise survived: users benefit when machines can handle natural human input without requiring artificial command syntax.

1994–1995: Recommender Systems and Collaborative Filtering

In the mid-1990s, collaborative filtering emerged as one of AI’s most important practical uses. Paul Resnick, Joseph Konstan, and John Riedl were among the key researchers behind GroupLens, a system for filtering Netnews based on the opinions of other users. Resnick became a leading scholar of social computing; Konstan and Riedl helped define recommender systems as a product discipline.

Recommender systems mattered because they made AI invisible but economically central. They did not converse like humans. They did not solve logic puzzles. They helped users choose what to read, buy, watch, or ignore. This is AI as information scent and choice architecture.

Modern product teams sometimes over-focus on chatbots because chat is visible. But recommendation has been one of the highest-impact AI applications in commercial history. Search ranking, feeds, shopping suggestions, music discovery, video platforms, and marketplace personalization all depend on the same broad idea: use patterns in past behavior to reduce future choice overload.

For UX designers, recommenders are both powerful and dangerous. They improve discoverability, but they also shape attention. Their interface is not merely a list; it is a behavioral environment.

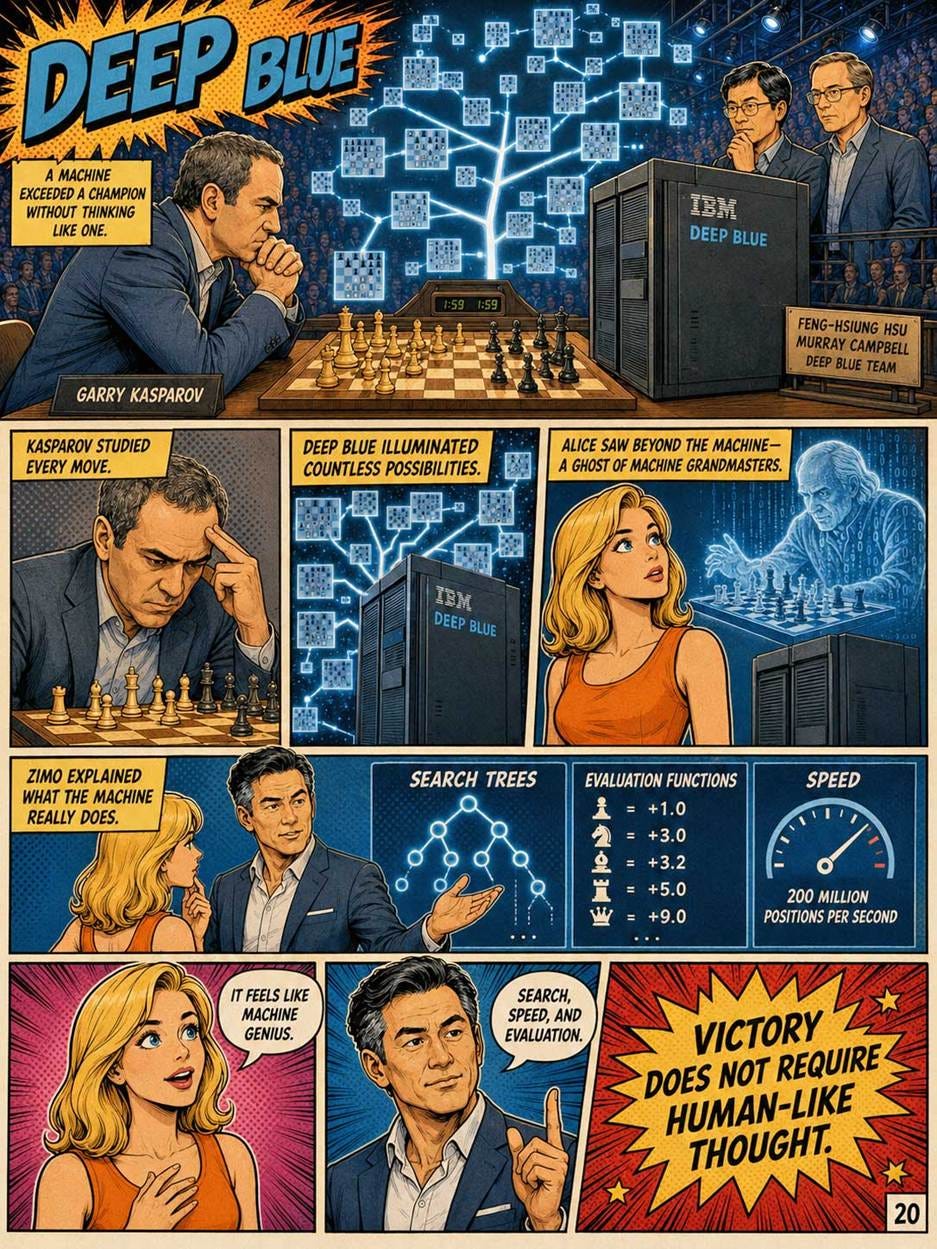

1997: Deep Blue Beats Kasparov

In 1997, IBM’s Deep Blue defeated world chess champion Garry Kasparov. Feng-hsiung Hsu, a computer scientist and chess-hardware specialist, was one of Deep Blue’s principal architects; Murray Campbell, an IBM researcher, was another central member of the team. Deep Blue used specialized hardware, search, evaluation functions, and vast chess knowledge to examine 200 millions of positions per second.

Deep Blue was culturally enormous. It showed the public that a machine could beat the best human in a domain long associated with intelligence. But it was not modern AI in the ChatGPT sense. It did not understand language. It did not learn broadly. It searched a well-defined game tree extremely effectively.

The milestone matters because it separated performance from humanlike process. A machine can exceed humans without thinking as humans do. This is still true. Modern AI systems often feel humanlike because they use language, but their internal process remains alien. Product design should judge outcomes, failure modes, and user fit rather than rely on anthropomorphic metaphors.

Modern chess engines are vastly stronger than Deep Blue, and they work very differently. AlphaZero, released by DeepMind in 2017, learned chess from scratch by playing itself, with no human-supplied chess knowledge. The shift from Deep Blue (hand-coded heuristics plus brute force) to AlphaZero (pure learning) parallels the broader shift in AI.

2006: Deep Learning’s Quiet Rebirth

Geoffrey Hinton and his collaborators published a series of papers starting in 2006 that demonstrated practical methods for training deep neural networks: networks with many layers. Hinton coined the term “deep learning” to rebrand neural networks after years of unfashionability.

This was a masterclass in product marketing and nomenclature. The term “neural network” carried the heavy baggage of past failures. “Deep Learning” sounded new, profound, and powerful, bypassing legacy biases and catalyzing a massive new wave of enterprise adoption and venture capital.

The “Deep” in Deep Learning simply means more hidden layers between the input and the output, a structure completely dominant today. The pre-training techniques Hinton pioneered were conceptual precursors to the massive unsupervised pre-training of today’s Foundation Models. Both the technical advance and the brilliant marketing term carried through to 2026.

The 2006 papers were not earth-shattering on their own. But they suggested that with enough compute and enough data, deep neural networks could achieve previously impossible results. Hinton, working at the University of Toronto, attracted graduate students who would go on to define the next decade of AI.

The 2006 work is the inflection point at which the long neural network winter began to thaw. Within six years, deep learning would dominate AI research. Within sixteen years, it would produce ChatGPT.

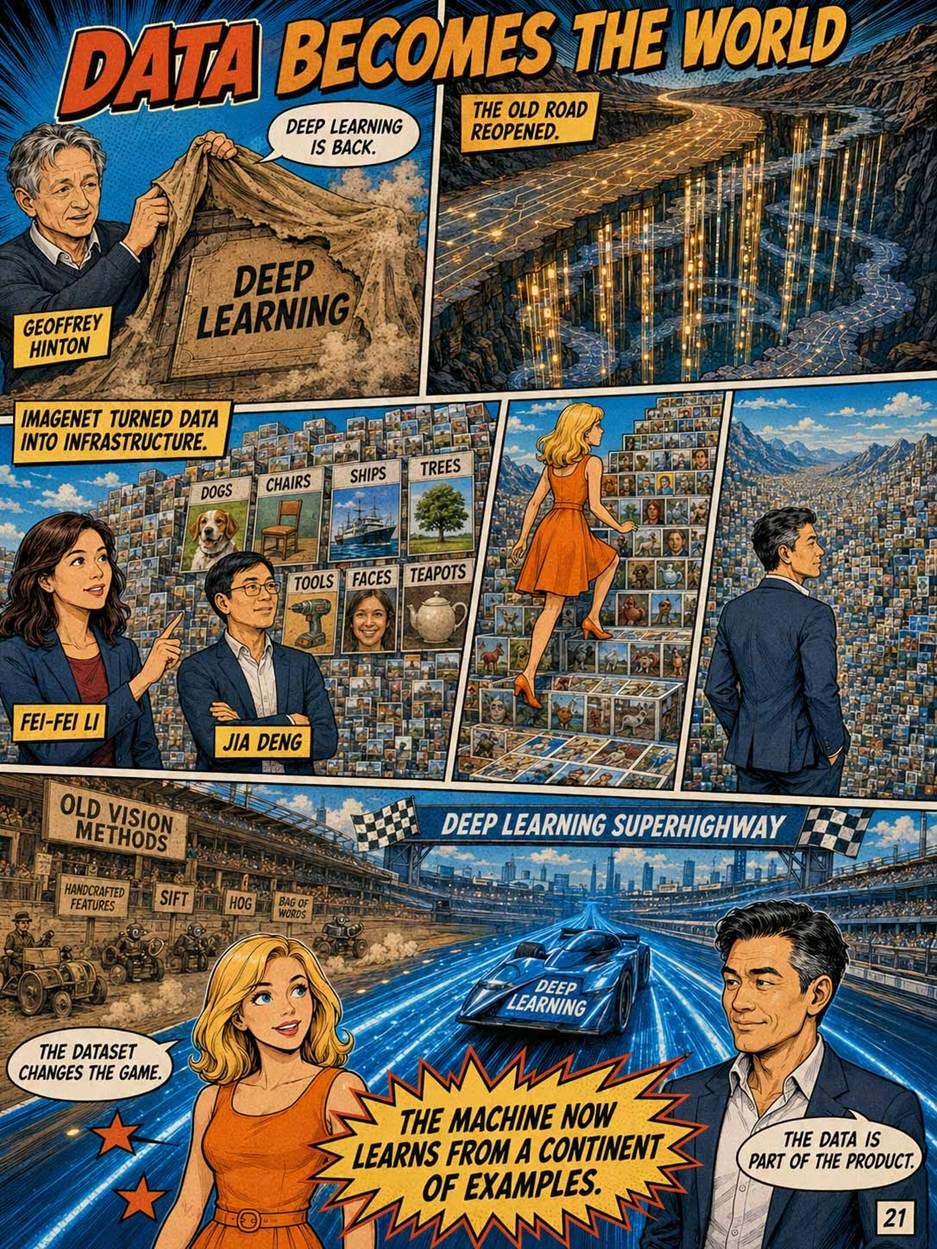

2009: ImageNet and the Data Revolution

In 2009, Fei-Fei Li and Jia Deng helped introduce ImageNet. Li is a computer scientist whose work helped define large-scale computer vision datasets; Deng was a doctoral researcher and later professor who played a key role in building and organizing ImageNet. The project aimed to populate WordNet categories with hundreds or thousands of full-resolution images per concept, creating a vast benchmark for visual recognition.

ImageNet mattered because AI needed not only better algorithms but better measurement and better data. It gave the computer-vision community a large shared target. Researchers could compare methods, compete, and improve quickly.

ImageNet fundamentally shifted the mental model of AI engineering from algorithmic craftsmanship to data logistics. It proved that a mediocre algorithm fed with massive amounts of excellent data will consistently outperform a brilliant algorithm fed with poor data.

For modern AI, ImageNet was a precursor to the foundation-model era. It showed that scale changes behavior. More data, better benchmarks, and public competition can turn a fragmented research field into a fast-moving engineering discipline. The same logic later applied to language corpora, code repositories, multimodal datasets, and human-preference data.

For product teams, ImageNet’s lesson is that the dataset is part of the product. What the system sees during training shapes what users experience at deployment.

2011: IBM Watson Wins Jeopardy!

In 2011, IBM Watson competed on the TV show Jeopardy! against top human champions. David Ferrucci, an IBM researcher, led the Watson project and framed it as a challenge in open-domain question answering over large collections of unstructured information.

Watson mattered because it brought natural-language AI back into public imagination. It combined retrieval, parsing, scoring, and many specialized components. It was not a single neural foundation model. It was a pipeline.

Watson’s later commercial story was mixed, especially in healthcare. The lesson is product-market fit. A system that wins a televised benchmark may still struggle in messy professional practice. Healthcare, law, finance, and enterprise operations require integration, trust, data governance, workflow fit, and liability management. Intelligence alone is not a product.

Modern LLMs outperform Watson-like systems in fluency and breadth, but many successful AI products still use Watson-like decomposition: retrieve evidence, rank candidates, call tools, and assemble an answer. The pipeline did not disappear. It was absorbed into larger AI architectures.

2011: Siri Launches

Apple released Siri as part of iOS 5 in October 2011, just one day before Steve Jobs’s death. Siri originated as a DARPA-funded project at SRI International (the “SRI” in Siri) and was acquired by Apple in 2010.

Siri was the first voice assistant integrated with a mainstream consumer product. Amazon’s Alexa followed in 2014, Google Assistant in 2016.

Siri also represents a cautionary tale. Despite being first, Siri stagnated for years. The underlying technology was a hand-built pipeline of components that could not improve quickly. By 2026, Apple is widely seen as having fallen behind in AI assistants, and Siri has been substantially rebuilt with modern language models.

The lesson: being first does not protect you from being lapped by competitors with better foundational technology. UI polish does not compensate for stagnant underlying capability.

2012: AlexNet and the Deep-Learning Breakthrough

In 2012, Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton won the ImageNet competition with a deep convolutional neural network now known as AlexNet. Krizhevsky was a University of Toronto doctoral student whose implementation became famous; Sutskever later became a major figure in deep learning and co-founded OpenAI; Hinton was the long-time neural-network advocate whose ideas finally met the right hardware and data.

AlexNet was important because it made deep learning undeniable. It used GPUs, large labeled data, and a deep neural architecture to outperform traditional computer-vision methods. The result changed research funding, hiring, corporate strategy, and product roadmaps.

This is the beginning of modern AI as an industrial force. Before AlexNet, deep learning was promising but contested. After AlexNet, every major technology company had to take neural networks seriously. The modern AI landscape of large multimodal systems, image generators, video models, and AI assistants depends on the same scaling pattern: data plus compute plus neural architecture plus engineering.

For UX designers, AlexNet’s consequence was delayed but profound. Image understanding enabled photo search, visual moderation, accessibility features, visual shopping, medical imaging support, autonomous perception, and eventually multimodal chat.

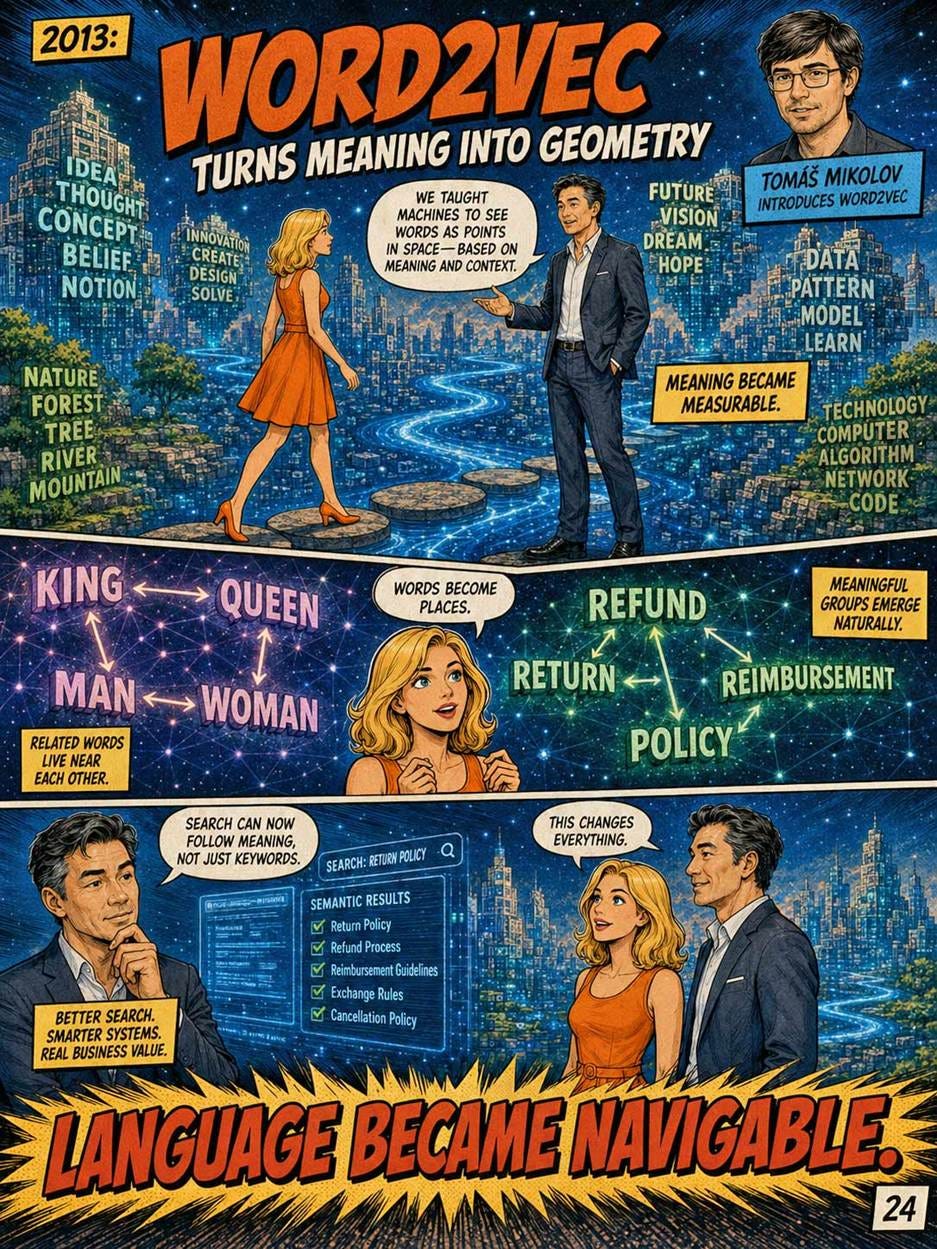

2013: Word2Vec and Meaning as Geometry

In 2013, Tomáš Mikolov and colleagues at Google introduced Word2Vec. Mikolov is a Czech computer scientist known for neural language representations; Jeff Dean, a Google engineer and computer-systems leader, helped create the infrastructure context in which large-scale neural methods could flourish.

Word2Vec learned word embeddings: numerical vectors that captured semantic and syntactic relationships from context. Words used in similar contexts ended up near each other in vector space. This made language computationally tractable in a new way.

The importance for modern AI is immense. Embeddings are now everywhere: search, recommendations, retrieval-augmented generation, clustering, semantic similarity, personalization, and memory systems. A large language model is not simply Word2Vec scaled up, but the idea that meaning can be represented geometrically is fundamental.

For product strategy, embeddings are one of the most useful AI concepts to understand. They allow products to match by meaning rather than exact keywords. That is why modern search can find “refund policy” when the document says “returns and reimbursement.” It is also why retrieval systems can feed relevant context into chatbots.

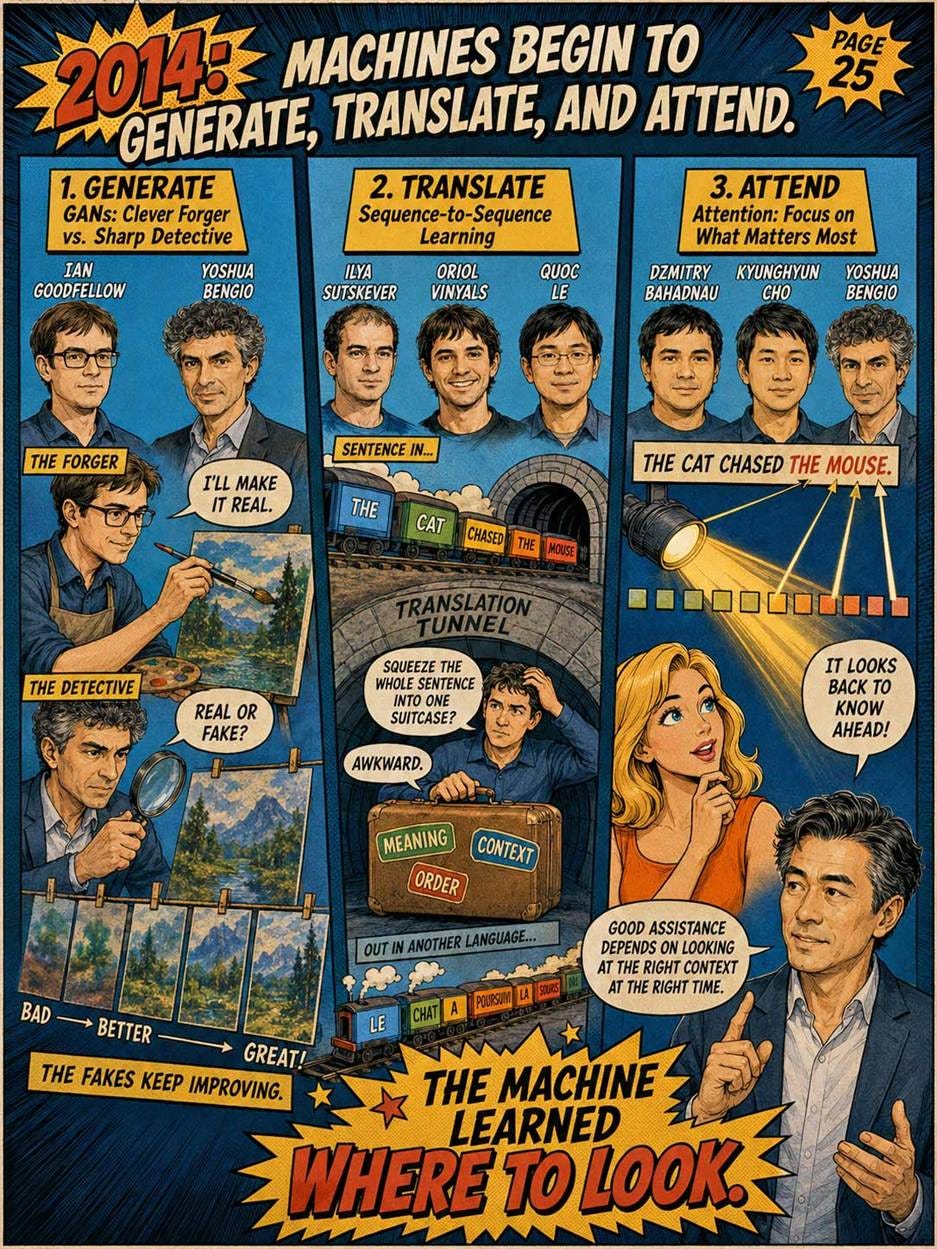

2014: GANs and Generative Imagination

In 2014, Ian Goodfellow introduced generative adversarial networks with Yoshua Bengio and other collaborators. Goodfellow is a machine-learning researcher known for generative models and adversarial examples; Bengio is a Canadian computer scientist and deep-learning pioneer.

A GAN has two parts: a generator that creates synthetic examples and a discriminator that judges whether they look real. The two improve through competition. This minimax game produced striking image-generation results and made generative AI a serious research area.

GANs were the first widely successful approach to generating realistic images. They produced the early generation of deepfakes and AI-generated art that dominated the late 2010s

GANs were eventually overtaken in many consumer text-to-image systems by diffusion models, which proved easier to scale and control. But GANs changed expectations. They showed that AI could create, not just classify.

This distinction matters for UX. Classification AI is usually invisible: detect fraud, rank results, tag images. Generative AI is visible and interactive: make me a logo, write a draft, create a storyboard, synthesize a video. GANs helped move AI from back-office automation toward creative collaboration.

2014: Sequence-to-Sequence Learning and Attention

In 2014, Ilya Sutskever, Oriol Vinyals, and Quoc Le showed that neural networks could translate sequences end-to-end using LSTMs. Sutskever is a deep-learning researcher who later became central to OpenAI; Vinyals is a machine-learning researcher known for sequence models and later work at DeepMind; Le is a Google researcher known for large-scale neural learning.

Also in 2014, Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio introduced an attention mechanism for neural machine translation. Bahdanau and Cho were researchers in neural translation; Bengio was already a leading deep-learning figure. Their model learned to focus on relevant parts of the source sentence while generating each target word.

Attention was one of the decisive ideas in AI history. It made sequence models less dependent on compressing an entire input into one fixed representation. The model could look back at relevant parts. This later became the heart of transformers.

The product meaning is intuitive: attention lets a system use context selectively. That is what users expect from an assistant. When asked a question about a paragraph, a document, or a conversation, the system should attend to the relevant part rather than treat everything equally.

2016: AlphaGo Beats Lee Sedol

DeepMind, a London-based AI company acquired by Google in 2014, built AlphaGo. Led by Demis Hassabis (a former chess prodigy and neuroscientist) and David Silver, AlphaGo defeated Lee Sedol, one of the world’s top Go players, 4-1 in March 2016.

Go had been considered far harder for computers than chess because the search space is vastly larger and good positions are harder to evaluate. Most experts had believed competitive computer Go was at least a decade away.

AlphaGo combined deep learning (learned position evaluation) with tree search (looking ahead). It learned partly from human games and partly by playing itself.

The 2017 successor, AlphaGo Zero, learned entirely from self-play with no human data and was substantially stronger than the original AlphaGo. This was an even more profound result: it suggested that human knowledge was not just unnecessary for top performance but in some cases an active limitation.

AlphaGo also broke through in mainstream cultural awareness in East Asia in a way that previous AI achievements had not. The Lee Sedol match was watched by approximately 200 million people.

2017: The Transformer

In 2017, Ashish Vaswani and his coauthors published the most famous of all AI papers, “Attention Is All You Need.” Vaswani, then at Google, was one of the lead authors; Noam Shazeer, another major contributor, was already known for large-scale neural language work. The paper introduced the transformer, an architecture based on attention rather than recurrence or convolution. It was more parallelizable and faster to train at scale.

This is the central technical milestone behind modern generative AI. Transformers made it practical to train very large models on enormous corpora. They handled context better, scaled better, and generalized across language, code, images, audio, and video when adapted to those modalities.

The paper’s title turned out to be remarkably literal: attention really was all you needed. Within five years, transformers would dominate not only language tasks but also image and video generation.

The transformer turned language modeling from a specialized research task into a general platform. GPT, BERT, Gemini, Claude, Llama, and many multimodal systems are transformer descendants. When people in 2026 use a mainstream AI model, they are using a product lineage that runs directly through this 2017 paper.

The UX consequence was not immediate, but it was decisive. Once language became a scalable interface to computation, many software interactions could be redesigned around intent rather than command syntax.

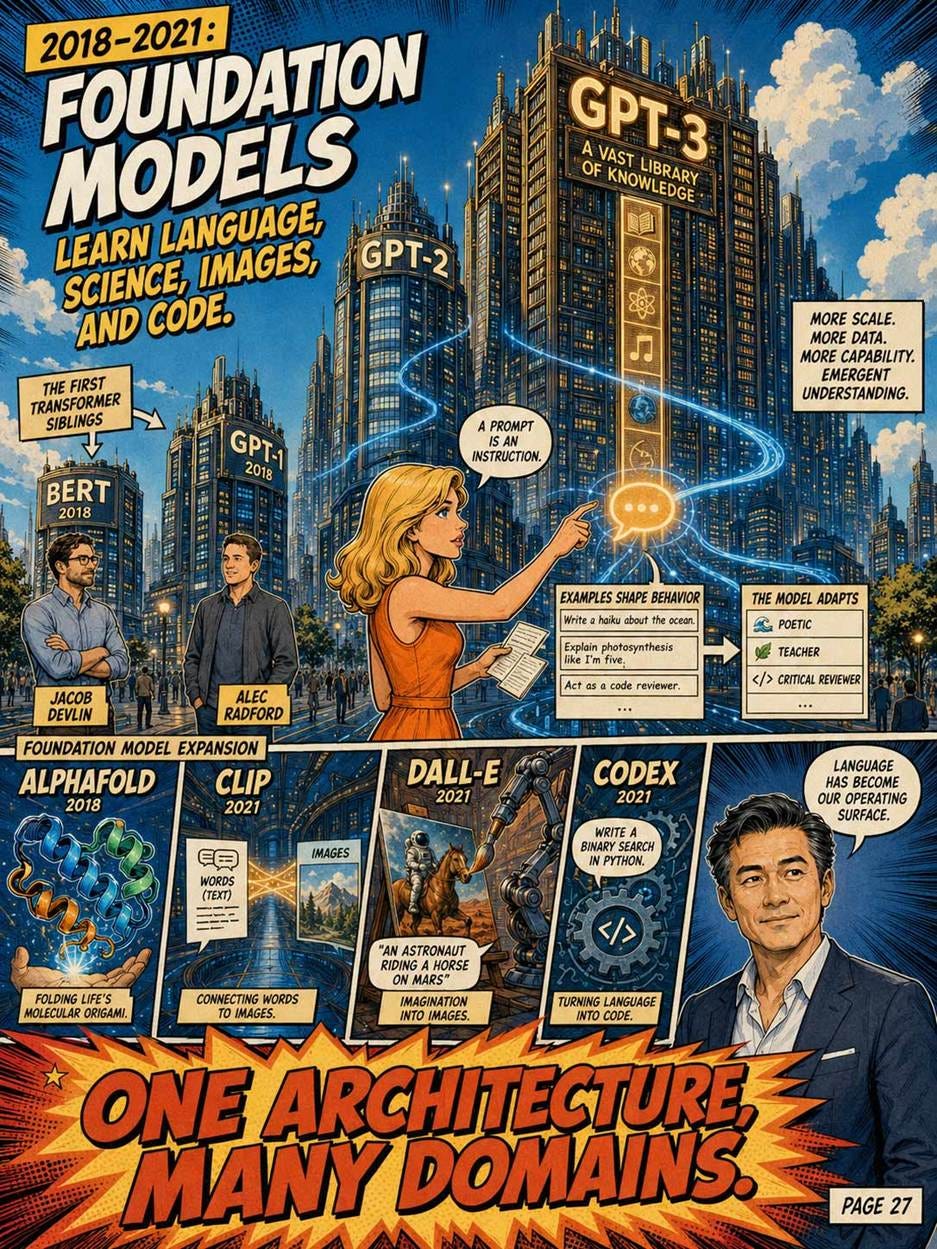

2018: BERT and GPT-1

In 2018, two related but different approaches emerged. Google released BERT (Bidirectional Encoder Representations from Transformers), led by Jacob Devlin. OpenAI released GPT-1 (Generative Pre-trained Transformer), led by Alec Radford.

Both used transformers. Both used the strategy of pre-training on enormous text corpora and then fine-tuning for specific tasks. BERT was bidirectional, designed primarily for understanding tasks. GPT was unidirectional, designed for generation.

GPT-1 was modest by later standards: 117 million parameters. But the approach scaled. GPT-2 (2019) had 1.5 billion parameters; GPT-3 (2020) had 175 billion.

OpenAI’s bet on scaling to keep making the model bigger, the data larger, and the compute greater was contrarian in 2018. Most researchers thought clever architectures or training tricks mattered more than raw scale. OpenAI was right; the scaling hypothesis defines modern AI economics.

2020: GPT-3

OpenAI released GPT-3 in May 2020. With 175 billion parameters, it was 100 times larger than GPT-2. It demonstrated capabilities that surprised even its creators: it could write coherent essays, translate languages, generate code, and perform tasks it had never been explicitly trained on.

GPT-3 was the first AI system that gave many serious researchers the unsettling feeling that something new was happening. The system did things that arguably required some form of reasoning, even though it was just predicting the next word.

OpenAI initially restricted GPT-3 access to researchers and selected developers. The general public would not have direct access until ChatGPT.

GPT-3’s most important contribution was probably not the model itself but the demonstration of “in-context learning,” the ability of a sufficiently large language model to perform new tasks just by being shown examples in its prompt. This is the foundation of prompt engineering as a discipline, and it is why modern AI products feel less like traditional software and more like collaborating with a strange but capable assistant.

2020–2021: AlphaFold and AI for Science

In 2020, DeepMind’s AlphaFold achieved a major breakthrough in protein structure prediction, later described in a 2021 Nature paper. John Jumper, a physicist and machine-learning researcher, led much of AlphaFold’s technical work; Demis Hassabis led DeepMind as the organization pursuing the project. AlphaFold used neural architectures and biological constraints to predict protein structures with striking accuracy.

AlphaFold matters in a different way from ChatGPT. It is not a general consumer interface. It is AI as scientific infrastructure. It showed that deep learning could solve a long-standing domain problem with practical implications for biology and medicine.

For product strategists, AlphaFold demonstrates that foundation-style methods are not only for content generation. The deeper pattern is representation learning at scale. When a model can learn the structure of a domain, it can become a platform for discovery.

2021: CLIP, DALL-E, and Multimodal AI

In 2021, OpenAI introduced CLIP and DALL-E. Alec Radford was a lead figure behind CLIP, which learned visual concepts from natural-language supervision; Aditya Ramesh was a leading author on DALL-E, which generated images from text prompts. CLIP connected images and text in a shared representational space, enabling zero-shot visual recognition. DALL-E used a transformer to generate images from text-image representations.

This was one of the beginnings of modern multimodal AI. Users could refer to visual concepts in ordinary language. The system could connect text instructions to images. Later tools such as DALL-E 2, Midjourney, Stable Diffusion, Nano Banana Pro, and video-generation systems inherited this broad direction: language became a control surface for media generation. OpenAI described DALL-E 2 in 2022 as creating realistic images and art from natural-language descriptions.

The product significance is large. Before multimodal generative AI, many creative tools required users to manipulate technical controls: layers, masks, timelines, curves, parameters. After text-to-image and text-to-video systems, users could begin with intent. The interface moved closer to briefing an assistant than operating a tool palette.

This does not eliminate craft. It changes where craft lives. Designers increasingly curate, prompt, edit, constrain, evaluate, and integrate machine output rather than produce every pixel manually.

2021: Codex and AI for Programming

OpenAI Codex showed that language models could write code. Mark Chen, an OpenAI researcher, was among the key authors; the Codex paper described a GPT model fine-tuned on public GitHub code and noted that descendants powered GitHub Copilot. Codex performed far better than GPT-3 on programming benchmarks such as HumanEval.

Codex mattered because programming is both language and action. Code is text that does things. A model that can generate code is therefore closer to a tool-using agent than a mere writer.

For product teams, coding assistants foreshadowed a broader change: AI would not only answer questions; it would operate software systems. Now, this direction continues in agentic workflows, where models write code, call APIs, inspect outputs, revise plans, and manipulate digital environments. The old dream of the Advice Taker returns, but with neural language models rather than hand-coded symbolic logic.

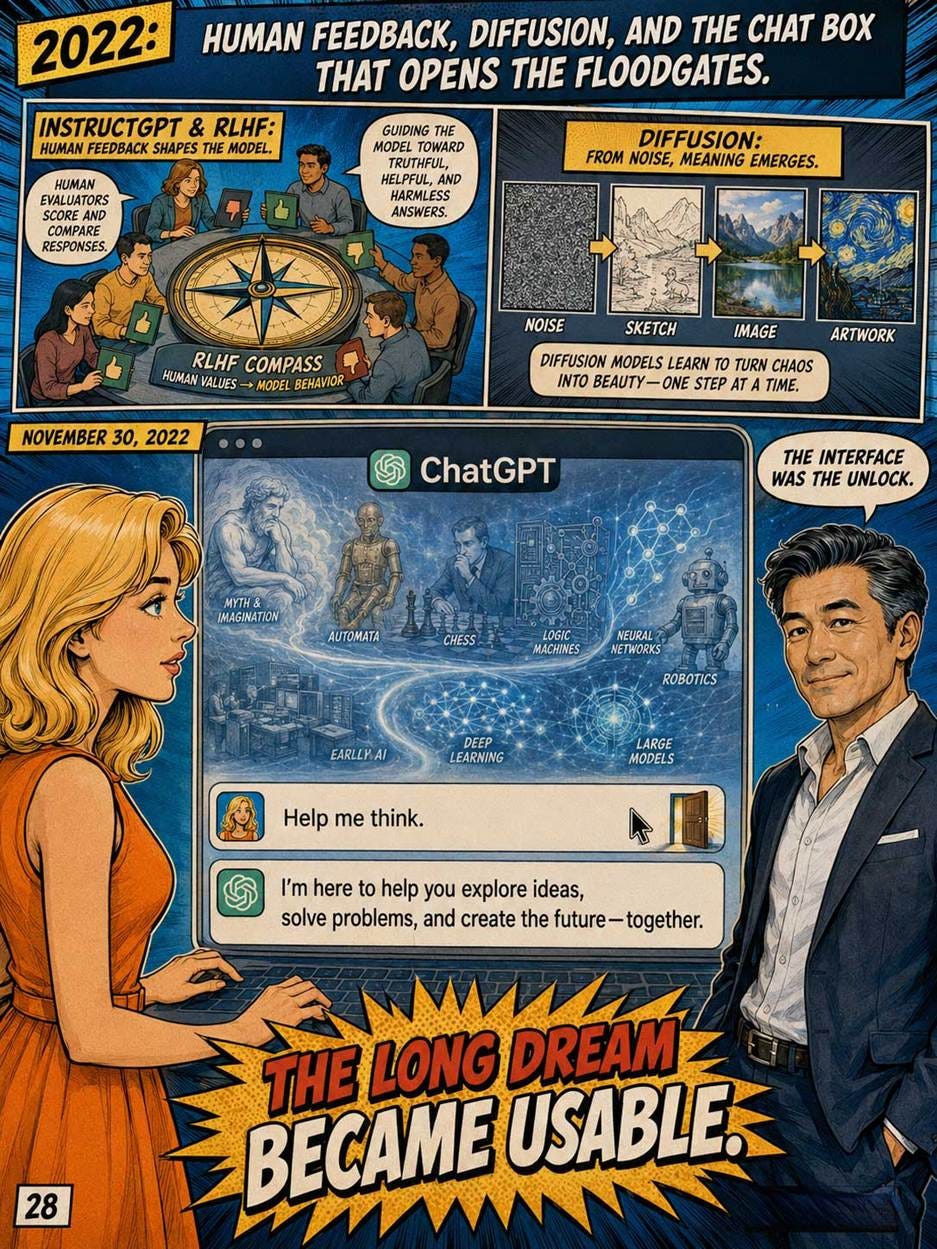

2022: InstructGPT and Human Feedback

In early 2022, OpenAI published work on InstructGPT. Long Ouyang, Jeff Wu, and colleagues showed how reinforcement learning from human feedback could make language models better follow user instructions. Jan Leike and Paul Christiano were among the researchers associated with the broader RLHF direction. The key result was striking: a smaller InstructGPT model could be preferred by human evaluators over a much larger base GPT-3 model.

This milestone is especially important for UX. A base language model predicts text. A useful assistant follows intent. That is not the same objective. Human feedback helped shift the model from raw continuation toward helpful response behavior.

InstructGPT was not just a safety or alignment technique. It was a product-quality technique. It reduced the gap between what users meant and what the model produced. The model became more instructable, more conversational, and more useful.

This is one of the clearest moments where UX and AI research meet. The “user” entered the training loop. Human preference became part of model behavior. In modern AI products, this idea continues through preference tuning, model routing, tool feedback, red-teaming, evaluation suites, and product telemetry.

2022: Diffusion Models and the Democratization of Image Generation

In 2022, latent diffusion models and systems such as Stable Diffusion made image generation widely accessible. Robin Rombach and Björn Ommer were leading figures behind latent diffusion research. Their approach moved diffusion into a compressed latent space, reducing computational cost while enabling powerful text-conditioned image generation.

Diffusion models gradually overtook GANs in many text-to-image applications because they were stable, scalable, and controllable. They converted noise into images through learned denoising steps, guided by text or other conditions.

For UX and product strategy, diffusion models changed the economics of visual ideation. Concept art, mood boards, thumbnails, advertising comps, storyboards, interface illustrations, and product imagery could be generated in seconds. The limiting factor shifted from manual production to taste, direction, selection, and integration.

The connection to current tools is direct. Image models such as Nano Banana and video-generation systems such as Seedance sit downstream from the generative-media revolution that diffusion helped popularize, even though modern systems increasingly mix diffusion, transformers, multimodal encoders, and proprietary control methods.

November 30, 2022: ChatGPT

On November 30, 2022, OpenAI launched ChatGPT as a research preview. It was based on the GPT-3.5 series and trained with methods related to InstructGPT. OpenAI described the conversational format as allowing follow-up questions, corrections, and dialogue.

This was the point at which eighty years of AI history became a mainstream user experience. ChatGPT was not the first chatbot. It was not the first language model. It was not the first neural network, not the first expert system, not the first machine-learning product, and not the first generative AI system. Its breakthrough was the combination.

It had enough language ability from transformer scaling. It had enough instruction-following behavior from human feedback. It had enough breadth from pretraining. It had enough usability from a simple chat interface. It was accessible in a browser. It required no API key, no machine-learning knowledge, no workflow integration, and no specialized training. The user could simply type.

This is why ChatGPT mattered so much. It collapsed the adoption barrier. Earlier AI often required users to adapt to the machine: write rules, label data, choose commands, configure models, or understand a narrow interface. ChatGPT adapted to the user’s language. That made AI feel less like software and more like a collaborator.

ChatGPT itself was technically a modest update to existing technology. What made it transformative was the conversational interface and the openness of access. OpenAI made it free and easy to try. No API key required, no specialized knowledge, no technical setup; just a chat box.

This is the most important UX lesson in this entire 80-year history. The underlying technology had existed for at least two years before November 2022. What had been missing was a usable interface that ordinary people could engage with. Once that interface existed, AI’s commercial relevance changed permanently. ChatGPT turned AI into a general-purpose interaction pattern.

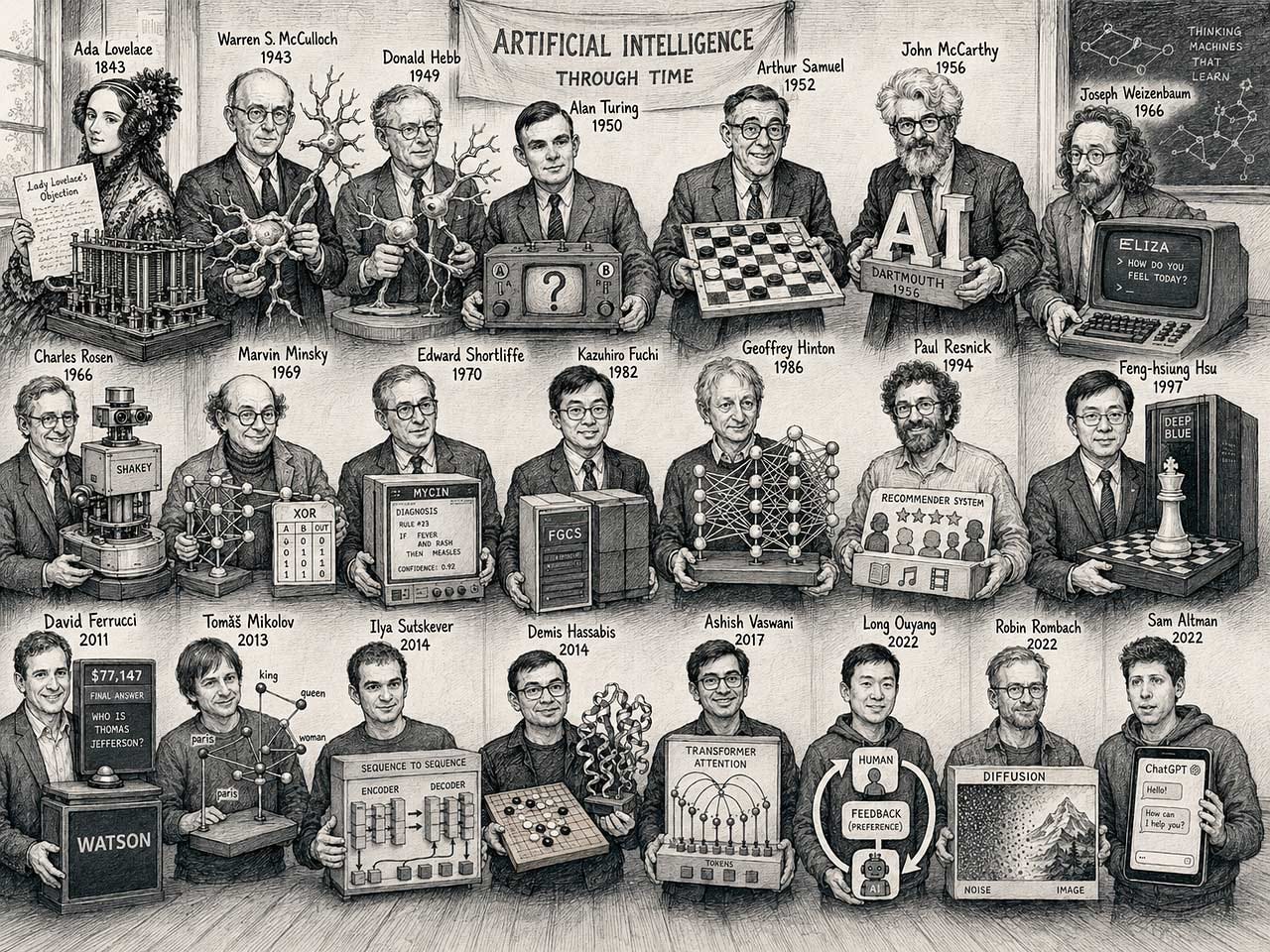

This run-through of AI history mentioned many pioneers, but there were more. Here, I tried to envision what it might look like if the most important AI pioneers were brought together in a workshop by a time machine. Wouldn’t that be something to attend!

The Themes of AI History

Across these eighty years, AI alternated between two large traditions.

The first tradition was explicit knowledge: logic, rules, symbolic reasoning, expert systems, planning, and structured representation. It was attractive because it was legible. Designers and engineers could inspect the rules. Experts could debate them. Explanations were possible. But the method was brittle. The world is too varied to be fully hand-coded.

The second tradition was learning from data: neural networks, statistical speech recognition, support vector machines, recommenders, embeddings, deep learning, transformers, and foundation models. This tradition was powerful because it scaled with examples. It did not require every rule to be written by hand. But it created new problems: opacity, bias, hallucination, evaluation difficulty, and user over-trust.

Modern AI products combine these traditions. Today, an assistant may use a transformer model to interpret a request, embeddings to retrieve relevant documents, symbolic tools to call APIs, business rules to enforce constraints, reinforcement learning or preference tuning to improve behavior, and human-centered interface design to help users understand what happened. The old debate between symbolic AI and neural AI is less useful than asking which parts of the product need flexibility and which parts need guarantees.

Theme 1: The Hype–Disillusionment Pendulum

AI has repeatedly swung between extravagant confidence and punitive disappointment. A new result creates the impression that intelligence is nearly solved. Money arrives. Researchers, vendors, governments, and journalists extrapolate too far. Then the systems fail outside their narrow test conditions, customers discover maintenance costs, and funding becomes scarce. The “AI winter” is not just a funding event; it is a trust event.

The current boom has more substance than earlier ones because AI is already embedded in work, education, software development, media creation, and consumer behavior. Stanford’s 2026 AI Index says organizational AI adoption reached 88%, generative AI reached 53% population adoption within three years, and global corporate AI investment hit $581.7 billion in 2025. Yet the old danger remains: when investment outruns product value, disappointment does not require technical failure. It only requires inadequate ROI.

If this reasserts in the coming decade, the likely outcome is not a total AI winter, because too many useful systems now exist. Instead, we may see a segmented winter. Generic AI wrappers, undifferentiated copilots, and speculative agent companies would freeze first. Infrastructure providers, deeply integrated workflow products, medical documentation tools, coding systems, design tools, and enterprise platforms with measurable productivity gains would survive. The cold would not kill AI; it would kill lazy AI product strategy.

Theme 2: The Anthropomorphic Trap

Humans have a cognitive bias to project empathy, consciousness, and infallible intent onto any system that mimics human conversation or biological traits. It is the most dangerous mental model mismatch in human–computer interaction, as users inevitably trust the system far more than the underlying mathematics justify.

The seeds were irresponsibly planted in 1943 when McCulloch and Pitts named their math “neurons.” Turing enshrined deception as a behavioral benchmark in 1950. But Joseph Weizenbaum’s ELIZA (1966) definitively proved the trap: users poured out their deepest emotional traumas to a dumb keyword-substitution script simply because it used conversational pronouns. Garry Kasparov fell for it in 1997, attributing “alien intuition” to Deep Blue’s sheer brute-force math.

With the rise of hyper-realistic generative spatial video (Seedance) and flawless, emotionally modulated voice agents, this trap threatens to become stronger. If unchecked, users will form parasocial dependencies, surrendering life-or-death medical, financial, or emotional decisions to software under the delusion of fiduciary empathy. If this crisis reasserts itself, we may need “De-anthropomorphizing Design.” We will have to deliberately degrade the user experience, injecting synthetic friction such as forced robotic vocal tones, persistent system-status watermarks, and jarring character breaks to constantly remind the user they are operating a tool, not confiding in a friend.

Theme 3: The Bitter Lesson

Rich Sutton named it in 2019, but the pattern has run through the entire 80 years: general methods that scale with computation reliably beat methods that rely on human knowledge or clever engineering. Compute and data win; cleverness loses.

Hand-coded chess programs lost to brute-force search (Deep Blue). Brute-force search lost to learned evaluation (AlphaZero). Rule-based machine translation lost to statistical methods, which lost to neural methods, which lost to transformers. Hand-engineered computer vision pipelines lost to convolutional networks trained on millions of images. Carefully crafted dialog systems lost to language models trained on most of the internet. Each time, the people who had spent years building elegant, knowledge-intensive systems were displaced by people who had simply trained a larger model on more data. The expert systems of the 1980s embodied the opposite philosophy and failed precisely because human-encoded rules cannot scale.

The bitter lesson reasserting itself in the coming decade has clear implications. AI startups attempting to build “small but smart” models with clever architectures will mostly lose to those integrating with frontier models from OpenAI, Anthropic, Google, and their Chinese counterparts. Domain-specific expertise embedded in code will lose to general models with appropriate prompting and retrieval. Product strategy advice: do not build what the frontier labs will build for you in eighteen months. Build the layer above, the workflow, the integration, and the human interface, where the bitter lesson does not apply because humans are still in the loop. The exception is if scaling itself genuinely stops paying off, in which case the pattern inverts and we are back to the phoenix theme above.

Theme 4: “The Infrastructure Bottleneck”: Good Ideas Wait Decades for Hardware and Data to Catch Up

Many of the most important ideas in AI were conceived long before they became practical. The gap between theoretical insight and practical impact is one of the most consistent, and most underappreciated, features of the field.

Backpropagation is the textbook example. The mathematical foundations were described by Paul Werbos in his 1974 PhD thesis. Rumelhart, Hinton, and Williams published their influential version in 1986. But neural networks did not dominate practical AI until the 2010s, when three infrastructure prerequisites finally converged: GPUs provided the parallel computing power needed to train large networks (Nvidia’s CUDA platform, released in 2007, was a critical enabler); the internet generated the enormous datasets required for training (ImageNet, Common Crawl, Wikipedia); and cloud computing made it economically feasible for researchers without supercomputer budgets to train large models. AlexNet was trained on two consumer-grade Nvidia GPUs. Without those GPUs, the same neural network architecture would have taken months to train instead of days, and the AlexNet result would never have happened.

The Transformer tells a similar story. Self-attention mechanisms existed before 2017, but the Attention Is All You Need paper showed that they could replace recurrence entirely and, crucially, that the resulting architecture was far more parallelizable, enabling it to take full advantage of GPU clusters. The Transformer did not just propose a new idea; it proposed an idea that was perfectly matched to the available hardware. If GPUs had not existed, the Transformer would have been an interesting theory paper, not the foundation of a trillion-dollar industry.

Even the original McCulloch-Pitts neuron had to wait. In 1943, there were no programmable digital computers capable of simulating large networks of artificial neurons. The idea was correct, but the infrastructure to realize it would not exist for decades.

This problem is already reasserting itself, and the current bottleneck is visible to everyone: energy and chip fabrication. Current large language models require enormous amounts of electricity to train and to run. If energy costs rise, if chip supply remains constrained, or if governments regulate data center construction, the scaling curve that has driven AI progress since 2012 will flatten.

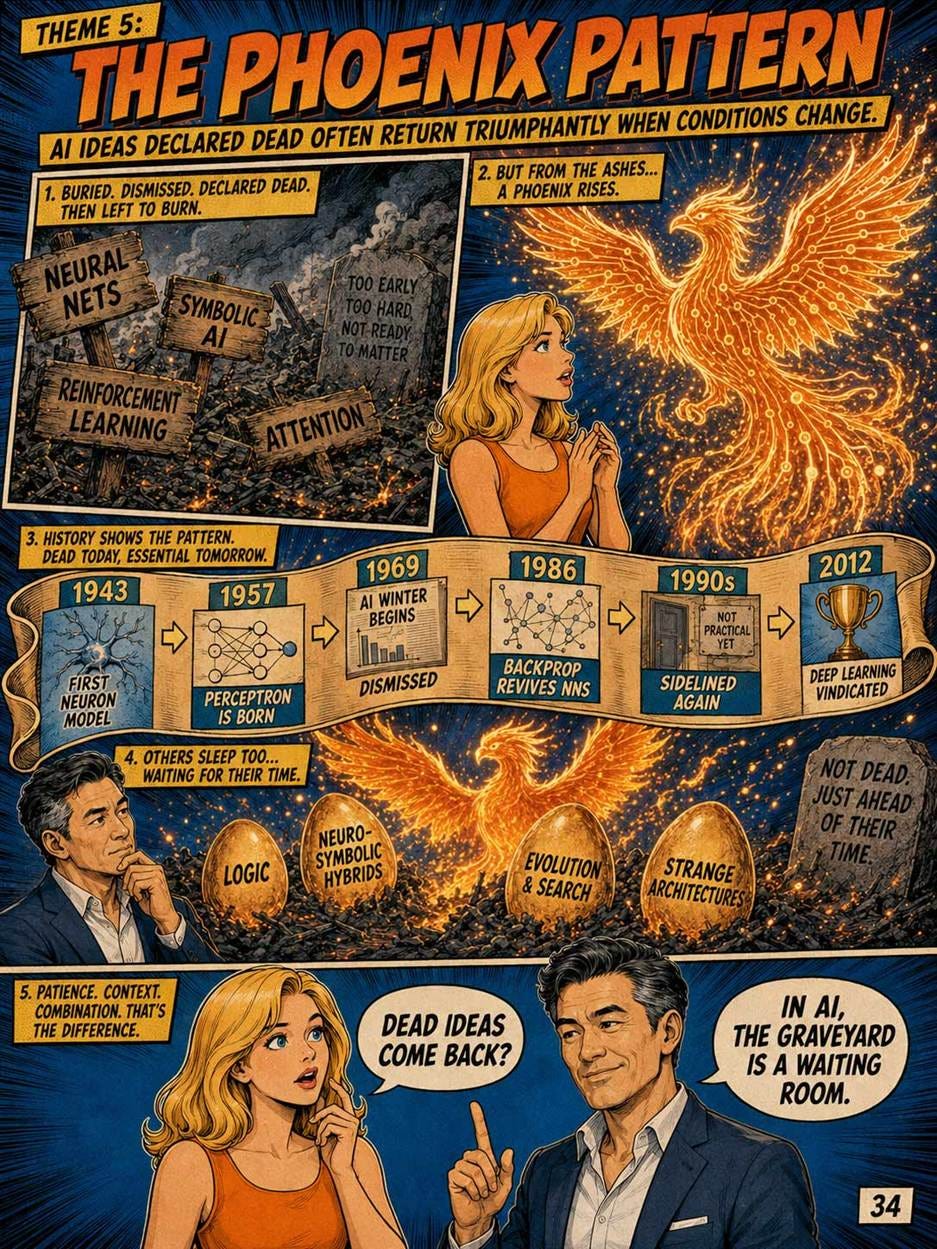

Theme 5: The Phoenix Pattern

Approaches confidently declared dead in AI have a habit of returning triumphantly decades later, once underlying conditions change. Almost every successful idea in current AI was once dismissed.

Neural networks are the canonical example. McCulloch and Pitts proposed them in 1943. Rosenblatt built the first one in 1957. Minsky and Papert killed the field with their 1969 book. Backpropagation revived them in 1986. Support vector machines pushed them aside in the 1990s. AlexNet vindicated them definitively in 2012. Today they power everything. Convolutional networks waited from 1998 (LeNet) until roughly 2012 for their moment. Reinforcement learning had multiple false starts before AlphaGo. Even the transformer’s “attention” mechanism had antecedents in earlier work that nobody had managed to make practical at scale.

The phoenix pattern almost certainly applies to currently-dismissed approaches in the coming decade. Symbolic AI, formal logic programming, neuro-symbolic hybrids, evolutionary methods, and various exotic architectures are presently considered dead ends. If pure transformer scaling hits a wall, maybe running out of training data, hitting compute limits, or failing to deliver promised reasoning, the field will rummage through the discarded toolbox. Some “obsolete” 1980s approach will be combined with modern compute and modern data, and the result will appear miraculous. The career advice: do not assume that what is currently fashionable will remain so, and do not write off researchers working on unfashionable approaches. They are statistically likely to win Turing Awards in twenty years.

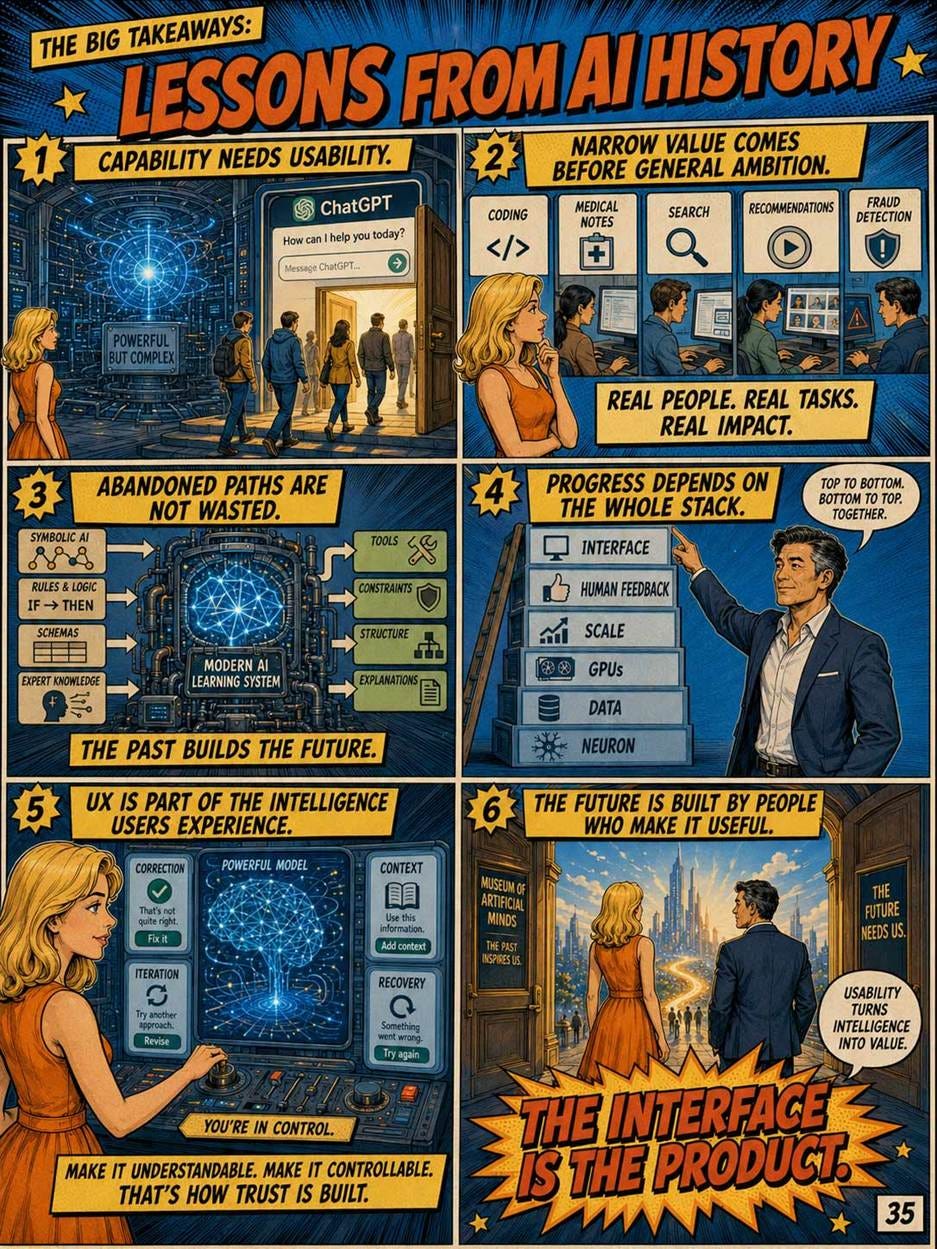

Lessons from AI History

The first lesson is that capability alone does not create adoption. Many old systems were technically impressive and commercially weak. ChatGPT became famous because its capability arrived in a form ordinary people could try immediately. UX converted research into behavior.

The second lesson is that narrow value usually precedes general ambition. MYCIN, XCON, postal OCR, speech recognition, recommenders, fraud models, and coding assistants all succeeded by solving concrete problems. Even foundation models become more valuable when packaged into specific workflows.

The third lesson is that the abandoned paths are not wasted. Symbolic AI failed as a complete theory of intelligence, but it lives on in tool use, rules, schemas, and planning. Expert systems failed as universal knowledge machines, but they taught us the value of explanations and domain expertise. The Fifth Generation project failed commercially, but its interest in parallel computing anticipated the hardware reality of modern AI. ELIZA was shallow, but it remains the canonical warning about conversational illusion.

The fourth lesson is that AI progress depends on the whole stack. McCulloch and Pitts had the neuron idea but no training infrastructure. Rosenblatt had learning but shallow networks. Backpropagation had the algorithm but insufficient compute and data. ImageNet had the dataset. AlexNet had GPUs. Transformers had scalable architecture. GPT-3 had scale. InstructGPT had human feedback. ChatGPT had the interface.

The fifth lesson is that UX is not cosmetic. It is part of the intelligence users experience. A model that is powerful but hard to steer is less useful than a slightly weaker model that supports correction, iteration, context, and recovery. The history of AI is partly the history of making machines smarter. But it is equally the history of making machine intelligence usable.

The story of AI has been a dance between human and machine, where only those AI advances that worked for people have survived.

By 2022, the field had traveled from an artificial neuron on paper to a conversational system used by millions. By 2026, the descendants of that system are multimodal, tool-using, long-context, image-generating, video-generating, code-writing, and increasingly agentic. Yet the central question remains the one that has always mattered for products: what can the system do for the user, how reliably can it do it, and how well does the interface help the user understand and control the result?

That is the real 80-year history of AI. It is not a straight march toward machine minds. It is a long sequence of inventions, disappointments, reframings, and product discoveries. The winners were not always the most philosophically elegant systems. They were the systems that found the right compromise between intelligence, task, infrastructure, and use.

Remember these truths derived from eight decades of trial and error:

Accommodate Human Behavior: Modern neural networks won the AI wars because they adapt to the statistical reality of human behavior, rather than forcing humans to comply with rigid logic gates. Your interfaces must do the same. Do not force users to learn syntax. Infer their intent.

Manage Expectations Mercilessly: From the Perceptron in 1957 to the AI Winters, overpromising destroys user trust. Do not let anthropomorphism trick your users into believing the system possesses infallible human empathy. Design strict constraints, require citations, and provide clear system status indicators. Graceful degradation is your primary responsibility.

The Interface is the Product: OpenAI did not invent the Large Language Model. They did not invent the Transformer. But they packaged it in a conversational UI in 2022, and they changed computing forever. The underlying algorithmic intelligence of 2026 is rapidly becoming a commoditized utility. The only differentiator left in the software industry is Usability.

The history of AI has been long, it has been winding, and it leads to the future.

About the Author

Jakob Nielsen, Ph.D., is a usability pioneer with 43 years experience in UX and the Founder of UX Tigers. He founded the discount usability movement for fast and cheap iterative design, including heuristic evaluation and the 10 usability heuristics. He formulated the eponymous Jakob’s Law of the Internet User Experience. Named “the king of usability” by Internet Magazine, “the guru of Web page usability” by The New York Times, and “the next best thing to a true time machine” by USA Today.

Previously, Dr. Nielsen was a Sun Microsystems Distinguished Engineer and a Member of Research Staff at Bell Communications Research, the branch of Bell Labs owned by the Regional Bell Operating Companies. He is the author of 8 books, including the best-selling Designing Web Usability: The Practice of Simplicity (published in 22 languages), the foundational Usability Engineering (30,300 citations in Google Scholar), and the pioneering Hypertext and Hypermedia (published two years before the Web launched).

Dr. Nielsen holds 79 United States patents, mainly on making the Internet easier to use. He received the Lifetime Achievement Award for Human–Computer Interaction Practice from ACM SIGCHI and was named a “Titan of Human Factors” by the Human Factors and Ergonomics Society.

· Subscribe to Jakob’s newsletter to get the full text of new articles emailed to you as soon as they are published.

· Read: article about Jakob Nielsen’s career in UX

· Watch: Jakob Nielsen’s first 41 years in UX (8 min. video)